So we design and ship a shiny new feature. How do we know if it is working? How do we measure and track its impact? There is no shortage of UX metrics, but what if we wanted to establish a simple, repeatable, and meaningful UX metric, specifically for our features? Well, let’s see how to do just that.

I first heard about the TARS framework from Adrian H. Raudaschl’s wonderful article on “How to measure the impact of features”. There, Adrian explains how his team tracks features and decides which ones to focus on, and then compares them against each other in a 2×2 quadrant matrix.

It turned out to be a very useful framework for visualizing the impact of UX work through the lens of business metrics.

Let’s see how it works.

1. T = Target Audience (%)

We begin by quantifying the target audience: we explore what percentage of a product’s users have the specific problem a feature is intended to solve. We can study existing or similar features that try to solve similar problems, and how many users interact with them.

However, the target audience is not the same as feature usage. As Adrian pointed out, if we know that 5% of all users use an existing Export button feature, it doesn’t mean that the target audience is 5%. More users may have the problem that the export feature is trying to solve, but can’t find it.

Question we ask: “What percentage of all users of our product have that specific problem that a new feature is intended to solve?”

2. A = Adoption (%)

Next, we measure how well we are doing at “acquiring” our target audience. To do that, we track how many users actually interact successfully with that feature over a specific period of time.

We don’t focus on CTR or session duration there, but rather on whether users engage with the feature in a meaningful way. For example, we look for anything that signals they found it valuable, such as sharing the export URL, the number of files exported, or the use of filters and settings.

High feature adoption (>60%) suggests that the problem was impactful. Low adoption (<20%) could imply that the problem has simple workarounds that people already rely on. Changing habits also takes time, so low adoption is expected at first.

Sometimes low feature adoption has nothing to do with the feature itself, but rather with where it sits in the user interface. Users may never discover it if it is hidden or has a confusing label. It should be obvious enough for people to notice.

Low adoption does not always equal failure. If an issue only affects 10% of users, achieving between 50% and 75% adoption within that specific niche means the feature is a success.

Question we ask: “What percentage of active target users actually use the feature to solve that problem?”
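As a rough illustration of that question, here is a minimal Python sketch. It assumes we already have two sets of user IDs: users who have the problem (the target audience) and users who engaged with the feature in a meaningful way. The function name, the user IDs, and the export example data are hypothetical.

```python
def adoption_rate(target_users: set[str], meaningful_users: set[str]) -> float:
    """Percentage of target users who used the feature in a meaningful way."""
    if not target_users:
        return 0.0
    # Only count meaningful usage by people inside the target audience.
    adopted = target_users & meaningful_users
    return 100 * len(adopted) / len(target_users)


# Hypothetical data: 10 users have the problem, 6 of them exported a file.
target = {f"u{i}" for i in range(10)}
exported = {"u0", "u1", "u2", "u3", "u4", "u5", "u42"}  # u42 is not a target user

print(adoption_rate(target, exported))  # 60.0
```

Intersecting with the target audience keeps usage by people outside it from inflating the number.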

3. R = Retention (%)

Next, we study whether a feature is actually used repeatedly. We measure frequency of use, or more specifically, how many users who interacted with the feature actually keep using it over time. Typically, that is a strong signal of meaningful impact.

If a feature has an average retention rate above 50%, we can be fairly confident that it has high strategic importance. A retention rate of 25% to 35% indicates medium strategic importance, and a retention rate of 10% to 20% indicates low strategic importance.

Question we ask: “Of all the users who adopted a feature in a meaningful way, how many came back to use it again?”
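One possible way to compute this from a usage log is sketched below in Python. It assumes that "came back" means using the feature on at least two distinct days within the observed period. That definition, the function name, and the sample events are illustrative assumptions, not part of TARS itself.

```python
from collections import defaultdict
from datetime import date


def retention_rate(events: list[tuple[str, date]]) -> float:
    """Percentage of adopters who used the feature on two or more distinct days."""
    days_by_user: dict[str, set[date]] = defaultdict(set)
    for user, day in events:
        days_by_user[user].add(day)
    if not days_by_user:
        return 0.0
    returned = sum(1 for days in days_by_user.values() if len(days) >= 2)
    return 100 * returned / len(days_by_user)


# Hypothetical log of meaningful feature usage: (user ID, day of use).
events = [
    ("u1", date(2025, 3, 3)), ("u1", date(2025, 3, 10)),
    ("u2", date(2025, 3, 4)),
    ("u3", date(2025, 3, 4)), ("u3", date(2025, 3, 4)), ("u3", date(2025, 3, 18)),
    ("u4", date(2025, 3, 5)), ("u4", date(2025, 3, 5)),  # twice on the same day
]

print(retention_rate(events))  # 50.0
```

Counting distinct days rather than raw events means that several uses in a single sitting do not count as coming back.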

4. S = Satisfaction Score (CES)

Finally, we measure how satisfied users are with the feature that we have shipped. We don’t ask everyone; we only ask “retained” users. This helps us detect hidden problems that might not be reflected in the retention score.

Once users have actually used a feature multiple times, we ask them how easy it was to solve a problem after using that feature, on a scale from “much harder” to “much easier than expected.” We know how we want to score.
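The article does not prescribe a scoring scheme, so the Python sketch below makes its own assumptions: a 7-point scale where 1 means “much harder” and 7 means “much easier than expected”, with answers of 5 or above counted as satisfied. The function name, the cutoff, and the responses are hypothetical.

```python
from statistics import mean


def satisfaction(responses: list[int], satisfied_from: int = 5) -> tuple[float, float]:
    """Return (percentage of satisfied respondents, mean score).

    Assumes a 1-7 scale; answers >= satisfied_from count as satisfied.
    """
    if not responses:
        raise ValueError("no survey responses")
    satisfied = sum(1 for score in responses if score >= satisfied_from)
    return 100 * satisfied / len(responses), mean(responses)


# Hypothetical answers from retained users only.
answers = [7, 6, 5, 4, 2, 6, 3, 5]

satisfied_pct, average = satisfaction(answers)
print(satisfied_pct, average)  # 62.5 4.75
```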

Using TARS for Feature Strategy

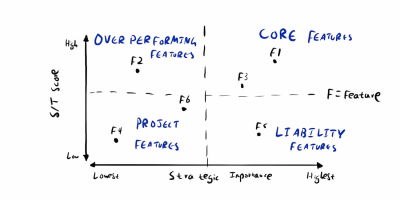

Once we start measuring with TARS, we can calculate an S÷T score: the percentage of satisfied users ÷ target users. It gives us an idea of how well a feature is performing for our intended target audience. Once we do that for each feature, we can map all the features into 4 quadrants in a 2×2 matrix.
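To make the arithmetic concrete, here is a small Python sketch of the TARS funnel. It follows one reading of the score: each percentage applies to the group from the previous step, and S÷T is the number of satisfied users divided by the number of target users. The function name and all numbers are made up for illustration.

```python
def tars_funnel(
    total_users: int,
    target_pct: float,     # T: % of all users who have the problem
    adoption_pct: float,   # A: % of target users who adopted the feature
    retention_pct: float,  # R: % of adopters who came back
    satisfied_pct: float,  # S: % of retained users who are satisfied
) -> dict[str, float]:
    """Walk the funnel step by step and derive the S÷T score."""
    target = total_users * target_pct / 100
    adopted = target * adoption_pct / 100
    retained = adopted * retention_pct / 100
    satisfied = retained * satisfied_pct / 100
    return {
        "target": target,
        "adopted": adopted,
        "retained": retained,
        "satisfied": satisfied,
        "s_over_t_pct": 100 * satisfied / target if target else 0.0,
    }


# Hypothetical feature: 1,000 users, T = 40%, A = 50%, R = 50%, S = 80%.
result = tars_funnel(1000, 40, 50, 50, 80)
print(result["satisfied"], result["s_over_t_pct"])  # 80.0 20.0
```

In this example, 80 of the 400 users who have the problem end up satisfied, so the S÷T score is 20%.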

Overperforming features are worth paying attention to: they have low retention but high satisfaction. They could simply be features that users don’t have to use often, but when they do, they are extremely effective.

Liability features have high retention but low satisfaction, so perhaps we should work on improving them. And then we can also identify core features and project features, and talk to designers, PMs, and engineers about what we should work on next.
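The quadrant mapping could be sketched in Python as follows. The text above only defines two quadrants: low retention with high satisfaction, and high retention with low satisfaction. The 50% cutoff, the placement of "core" (high on both axes) and "project" (low on both), and the feature data are all assumptions made for this example.

```python
def quadrant(retention_pct: float, satisfaction_pct: float, cutoff: float = 50.0) -> str:
    """Place a feature into one of four quadrants using a single assumed cutoff."""
    high_retention = retention_pct >= cutoff
    high_satisfaction = satisfaction_pct >= cutoff
    if high_satisfaction and not high_retention:
        return "overperforming"  # low retention, high satisfaction
    if high_retention and not high_satisfaction:
        return "liability"       # high retention, low satisfaction
    # Assumption: high on both axes is "core", low on both is "project".
    return "core" if high_retention else "project"


# Hypothetical features: (retention %, satisfaction %).
features = {
    "export": (20, 85),
    "search": (70, 30),
    "dashboard": (80, 75),
    "themes": (10, 25),
}

for name, (retention, satisfied) in features.items():
    print(name, quadrant(retention, satisfied))
```

A team would likely tune the cutoff per product, or use separate cutoffs for each axis.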

Conversion rate is not a UX metric

TARS does not cover the conversion rate, and for good reason. As Fabian Lenz pointed out, conversion is often considered the ultimate indicator of success. However, in practice it is always very difficult to present a clear connection between smaller design initiatives and large conversion goals.

The truth is that almost everyone on the team is working towards better conversion. An uptick could be related to many different initiatives, from sales and marketing to web performance improvements, seasonal effects, and UX initiatives.

Of course, UX can improve conversion, but it’s not really a UX metric. Often people simply can’t choose the product they are using. And often the desired business outcome arises from necessity and struggle, rather than trust and appreciation.

High conversion despite poor user experience

As Fabian writes, a high conversion rate can happen despite a bad user experience, because:

- Strong brand power attracts people,

- Aggressive but effective urgency tactics,

- The prices are extremely attractive,

- Marketing works brilliantly,

- Historical customer loyalty,

- Users simply have no choice.

Low conversion despite great user experience

At the same time, a low conversion rate can occur despite great UX, because:

- The offers are not relevant to the audience,

- Users do not trust the brand,

- Poor business model or high risk of failure,

- Marketing does not reach the right audience,

- External factors (pricing, timing, competition).

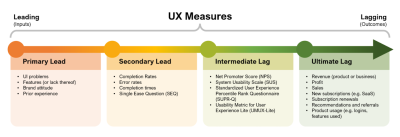

An improved conversion is a positive outcome of UX initiatives. But good UX work typically improves task completion, reduces time on task, minimizes errors, and avoids decision paralysis. And there are many actionable design metrics we could use to track UX and drive sustainable success.

Wrapping Up

Product metrics alone do not always provide an accurate view of how well a product performs. Sales may be going well, but users may be extremely inefficient and frustrated. Yet the churn rate stays low because users cannot choose the tool they use.

We need UX metrics to understand and improve the user experience. What I like most about TARS is that it is a simple way to connect customer usage and customer experience with relevant product metrics. Personally, I would also extend TARS with UX-focused metrics and KPIs, depending on the needs of the project.

Many thanks to Adrian H. Raudaschl for putting it together. And if you’re interested in metrics, I recommend following him for practical and useful guides on all of that.

Learn “How to measure UX and design impact”

You can find more details on UX strategy in 🪴 Measure UX & Design Impact (8h), a practical guide for designers and UX leads to measure and show the impact of their UX work on the business. Use code 🎟 IMPACT to save 20% off today.
