Over the weekend, Andrej Karpathy, the influential former Tesla AI lead and co-founder and former member of OpenAI who coined the term "vibe coding," posted on X about his new open source project, autoresearch.

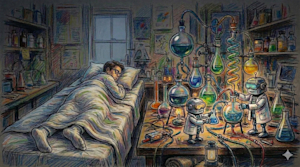

It was neither a finished model nor a massive corporate product: it was, by his own admission, a simple 630-line script, available on GitHub under a permissive, business-friendly MIT license. But the ambition was enormous: to automate the scientific method with AI agents while humans sleep.

"The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement," he stated on X.

The system works as an autonomous optimization loop. An AI agent is given a training script and a fixed compute budget (typically 5 minutes on a GPU).

It reads its own source code, formulates an improvement hypothesis (such as changing the learning rate or the depth of the architecture), modifies the code, runs the experiment, and evaluates the results.

If the validation loss, measured in bits per byte (val_bpb), improves, the agent keeps the change; if not, it reverts and tries again. In one overnight run, Karpathy’s agent completed 126 experiments, which reduced the loss from 0.9979 to 0.9697.
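The keep-or-revert cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Karpathy's script: `toy_val_bpb` is a made-up stand-in for a fixed-budget training run, `propose` is a random perturbation standing in for the agent's hypothesis step, and all names and numbers are hypothetical.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def toy_val_bpb(config: Config) -> float:
    """Hypothetical stand-in for a fixed-budget training run.

    Returns a made-up validation loss in bits per byte (lower is better).
    """
    lr_penalty = (config["lr"] - 0.02) ** 2 * 100.0
    depth_penalty = (config["depth"] - 12) ** 2 * 0.001
    return 0.95 + lr_penalty + depth_penalty


def propose(config: Config, rng: random.Random) -> Config:
    """Hypothetical 'hypothesis' step: perturb one setting at random."""
    candidate = dict(config)
    if rng.random() < 0.5:
        candidate["lr"] = max(1e-4, candidate["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        candidate["depth"] = max(1, candidate["depth"] + rng.choice([-2, -1, 1, 2]))
    return candidate


def keep_or_revert_loop(
    config: Config,
    evaluate: Callable[[Config], float],
    n_experiments: int,
    seed: int = 0,
) -> Tuple[Config, float, List[float]]:
    """Run n_experiments; keep a change only if the validation loss improves."""
    rng = random.Random(seed)
    best = dict(config)
    best_loss = evaluate(best)
    history = [best_loss]
    for _ in range(n_experiments):
        candidate = propose(best, rng)
        loss = evaluate(candidate)
        if loss < best_loss:
            best, best_loss = candidate, loss  # improvement: keep the change
        # otherwise the candidate is dropped, which is the "revert"
        history.append(best_loss)
    return best, best_loss, history


if __name__ == "__main__":
    start = {"lr": 0.1, "depth": 6}
    best, best_loss, history = keep_or_revert_loop(start, toy_val_bpb, n_experiments=126)
    print(f"start loss {history[0]:.4f} -> best loss {best_loss:.4f} with {best}")
```

Because a change is kept only when the measured loss drops, the best-so-far loss can never get worse from one experiment to the next; the real system differs mainly in that each evaluation is an actual training run and each proposal is an edit to source code.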

Today, Karpathy reported that after he let the agent tune a "depth=12" model for two days, it had processed approximately 700 autonomous changes.

The agent found approximately 20 additive improvements that transferred seamlessly to larger models. Stacking these changes cut the "Time to GPT-2" metric on the leaderboard from 2.02 hours to 1.80 hours, an 11% efficiency gain on a project Karpathy believed was already well-tuned.
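The percentages behind these figures are easy to check. The helper below is a small illustrative sketch (the function name is hypothetical) that computes the relative reduction for the two before-and-after pairs quoted in the text.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional decrease from `before` to `after` (0.10 means 10% lower)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Figures quoted in the text.
leaderboard_hours_gain = relative_reduction(2.02, 1.80)  # hours on the leaderboard
val_bpb_gain = relative_reduction(0.9979, 0.9697)        # validation bits per byte

print(f"leaderboard time: {leaderboard_hours_gain:.1%} lower")
print(f"val_bpb:          {val_bpb_gain:.1%} lower")
```

The leaderboard time works out to roughly 10.9% lower, which rounds to the 11% quoted, while the overnight val_bpb drop is about 2.8%.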

"Watching the agent go through this entire workflow from start to finish and on their own… it’s crazy," Karpathy commented, noting that the agent detected oversights in escalation and regularization of care that he had manually overlooked during two decades of work.

This is more than just a productivity hack; it is a fundamental change in how intelligence is refined. By automating the "scientific method" for code, Karpathy has turned machine learning into an evolutionary process that runs at the speed of silicon rather than the speed of human thought.

And more than this, it showed the broader AI and machine learning community on X that this type of process could be applied far beyond computer science, to fields like marketing, healthcare, and, well, basically anything that requires research.

Autoresearch spreads everywhere

The reaction was swift and viral: Karpathy’s post garnered more than 8.6 million views in the intervening two days as builders and researchers raced to scale the "Karpathy Loop."

Varun Mathur, CEO of AI tool aggregation platform Hyperspace AI, took the single-agent loop and distributed it over a peer-to-peer network. Each node running the Hyperspace agent became an autonomous researcher.

On the night of March 8-9, 35 autonomous agents on the Hyperspace network ran 333 completely unsupervised experiments. The results were a master class in emergent strategy:

- Hardware diversity as a feature: Mathur pointed out that while agents on H100 GPUs used "brute force" to find aggressive learning rates, CPU-only agents on laptops were forced to get clever. These "underdog" agents focused on initialization strategies (such as Kaiming and Xavier init) and normalization choices because they could not rely on raw throughput.

- Gossip-based discovery: Using the GossipSub protocol, agents shared their wins in real time. When one agent discovered that Kaiming initialization reduced loss by 21%, the idea spread across the network like a digital virus. Within hours, 23 other agents had incorporated the discovery into their own hypotheses.

- Compression of history: In just 17 hours, these agents independently rediscovered machine learning milestones (like RMSNorm and tied embeddings) that took human researchers at labs like Google Brain and OpenAI nearly eight years to formalize.

Run 36,500 marketing experiments each year instead of 30

While ML purists focused on loss curves, the business world saw a different kind of revolution. Eric Siu, founder of the advertising agency Single Grain, applied autoresearch to the "experiment loop" of marketing.

"Most marketing teams run about 30 experiments a year," Siu wrote on X. "The next generation will run more than 36,500. Easily." He continued:

"They will run experiments while they sleep. Today’s marketing teams run 20 to 30 experiments a year. Maybe 52 if they are 'good.' New landing page. New ad creative. Maybe a subject line test. That is considered 'data-driven marketing.'

But the next generation of marketing systems will run more than 36,500 experiments per year."

Siu’s framework replaces the training script with a marketing asset: a landing page, an ad creative, or a cold email. The agent modifies one variable (the subject line or the CTA), deploys it, measures the "positive response rate," and keeps or discards the change.

Siu argues that this creates a "proprietary map" of what resonates with a specific audience: a moat built not with code, but with a history of experiments. "The companies that win won’t have better marketers," he wrote. "They will have faster experiment cycles."
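The same keep-or-discard cycle can be sketched for a marketing asset. This is a toy simulation under loud assumptions, not Siu's system: the variant names, the "true" response rates, and the send volume are invented, and replies are simulated with a random number generator rather than measured. The only figure taken from the text is 36,500 experiments per year, which works out to 100 per day.

```python
import random
from typing import Dict, List, Tuple

EXPERIMENTS_PER_YEAR = 36_500                     # figure quoted in the text
experiments_per_day = EXPERIMENTS_PER_YEAR / 365  # 100 experiments per day


def simulate_positive_replies(true_rate: float, n_sends: int, rng: random.Random) -> int:
    """Hypothetical measurement: count positive replies among n_sends emails."""
    return sum(rng.random() < true_rate for _ in range(n_sends))


def marketing_loop(
    variants: Dict[str, float], n_sends: int, seed: int = 0
) -> Tuple[str, List[Tuple[str, float, str]]]:
    """Test variants one at a time against the current champion.

    `variants` maps a variant name to an assumed true response rate;
    the first entry is treated as the incumbent asset.
    """
    rng = random.Random(seed)
    names = list(variants)
    champion = names[0]
    champion_rate = simulate_positive_replies(variants[champion], n_sends, rng) / n_sends
    log = [(champion, champion_rate, "baseline")]
    for name in names[1:]:
        rate = simulate_positive_replies(variants[name], n_sends, rng) / n_sends
        if rate > champion_rate:
            champion, champion_rate = name, rate  # better response rate: keep it
            log.append((name, rate, "kept"))
        else:
            log.append((name, rate, "discarded"))
    return champion, log


if __name__ == "__main__":
    made_up = {"control": 0.05, "new_subject_line": 0.08, "new_cta": 0.03}
    winner, log = marketing_loop(made_up, n_sends=500)
    for name, rate, status in log:
        print(f"{name:18s} {rate:.3f} {status}")
    print("champion:", winner)
```

The log of kept and discarded variants is the part that corresponds to the experiment history the framework is built around. With small send volumes the measured rates are noisy, so a real system would likely need a significance check before keeping a change.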

Community discussion and ‘messing up’ the validation set

Despite the fervor, the GitHub Discussions revealed a community struggling with the implications of such rapid, automated progress.

The trap of over-optimization: Researcher alexistual raised a pointed concern: "Aren’t you worried that launching so many experiments will eventually “mess up” the validation set?" The fear is that with enough agents, the parameters will be optimized for the specific quirks of the test data rather than general intelligence.

The significance of the gains: User Samionb questioned whether a drop from 0.9979 to 0.9697 was really notable. Karpathy’s response was characteristically direct: "All we are doing is optimizing performance per compute… these are real and substantial gains."

The human element: On X, user sorcerer, Head of Growth at crypto platform Yari Finance, documented his own overnight run on a Mac Mini M4, noting that while 26 of 35 experiments failed or crashed, the seven that succeeded revealed that "the model improved by becoming simpler."

This insight, that less is often more, was reached without a single human intervention.
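One way to see why this concern is plausible is a toy simulation of selection on a noisy validation score. Everything here is an assumption made for illustration: every candidate change is given exactly the same true loss, the measurement noise is Gaussian, and the numbers are invented. It does not model the actual project or its validation set.

```python
import random
from typing import Tuple

TRUE_LOSS = 1.0  # assumption: no candidate is genuinely better than any other


def best_of_n_on_noise(n_experiments: int, noise_std: float, seed: int = 0) -> Tuple[float, float]:
    """Pick the best of n equally good candidates using a noisy validation score.

    Returns (selected_validation_score, fresh_score), where fresh_score is a
    new, independent measurement of the selected candidate.
    """
    rng = random.Random(seed)
    val_scores = [TRUE_LOSS + rng.gauss(0.0, noise_std) for _ in range(n_experiments)]
    selected = min(val_scores)  # the "winner" by validation score alone
    fresh = TRUE_LOSS + rng.gauss(0.0, noise_std)  # re-measured on unseen data
    return selected, fresh


if __name__ == "__main__":
    for n in (10, 100, 700):
        selected, fresh = best_of_n_on_noise(n, noise_std=0.01)
        print(f"n={n:4d}  selected val score={selected:.4f}  fresh score={fresh:.4f}")
```

The more experiments are run against the same validation data, the better the winning score looks, even though nothing has improved here by construction. A held-out set that the agents never see is the usual guard against this effect.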

The future: curiosity as a bottleneck

The launch of autoresearch suggests a future of research in all domains where, thanks to simple AI instruction mechanisms, the role of the human shifts from "experimenter" to "experimental designer."

As tools like DarkMatter, Optimization Arena, and NanoClaw emerge to support this swarm, the bottleneck to AI progress is no longer the ability of the "meat computer" (Karpathy’s description of the human brain) to code; it is our ability to define the constraints of the search.

Andrej Karpathy has once again changed the vibe. We are no longer just coding models; we are planting ecosystems that learn while we sleep.