When Brett Levenson left Apple in 2019 to lead business integrity at Facebook, the social media giant was in the midst of the Cambridge Analytica fallout. At the time, he thought he could solve Facebook’s content moderation problem simply with better technology.

He quickly realized that the problem went beyond technology. Human reviewers were expected to memorize a 40-page policy document that had been machine-translated into their language, he said. They then had about 30 seconds per piece of flagged content to decide not only whether it violated the rules, but also what to do about it: block it, ban the user, or limit its spread. Those quick calls had only “slightly better than 50% accuracy,” according to Levenson.

“It was like a coin flip, whether human reviewers could actually address the policies correctly, and this was many days after the damage had already occurred,” Levenson told TechCrunch.

That kind of delayed, reactive approach is not sustainable in a world of agile, well-funded adversarial actors. The rise of AI chatbots has only exacerbated the problem: content moderation failures have led to a number of high-profile incidents, such as chatbots providing teenagers with self-harm guidance or AI-generated images evading safety filters.

Levenson’s frustration led to the idea of “policies as code,” a way to turn static policy documents into executable, updatable logic closely tied to enforcement. That idea led to the founding of Moonbounce, which on Friday announced it had raised $12 million in funding, TechCrunch has exclusively learned. The round was co-led by Amplify Partners and StepStone Group.
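To make the “policies as code” idea concrete, here is a minimal sketch in Python. It is an illustration only, not Moonbounce’s implementation: the `Rule` type, the two example clauses, and the `violated_rules` helper are all hypothetical, and the simple string checks stand in for whatever model-based evaluation a real system would use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One clause of a written policy, expressed as executable logic."""
    rule_id: str
    description: str
    violates: Callable[[str], bool]  # True when the text breaks this clause


# Hypothetical policy: two clauses that would otherwise sit in a static document.
POLICY = [
    Rule(
        "no-contact-info",
        "Do not ask people to call a number in public posts",
        lambda text: "call me" in text.lower() and any(ch.isdigit() for ch in text),
    ),
    Rule(
        "no-shouting",
        "Do not post in all capitals",
        lambda text: text.isupper(),
    ),
]


def violated_rules(text: str, policy: list[Rule] = POLICY) -> list[str]:
    """Return the ids of every rule the text violates, in policy order."""
    return [rule.rule_id for rule in policy if rule.violates(text)]
```

Because each clause is data plus a function, updating the policy means editing or appending a `Rule` rather than redistributing a document for reviewers to memorize.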

Moonbounce works with businesses to provide an additional layer of security wherever content is generated, whether by a user or by AI. The company has trained its own large language model to examine a customer’s policy documents, evaluate content at runtime, provide a response in 300 milliseconds or less, and take action. Depending on the customer’s preferences, that action could mean slowing down distribution while the content awaits human review, or blocking high-risk content outright.
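The runtime flow described above (score the content, pick an action, stay within a latency budget) can be sketched as follows. This is a hedged illustration under stated assumptions: the `Action` names, the risk thresholds of 0.5 and 0.9, and the `score` callable are invented for the example; only the 300-millisecond budget and the slow-down and block actions come from the article.

```python
import time
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Action(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"  # slow distribution and queue for human review
    BLOCK = "block"        # stop high-risk content outright


@dataclass(frozen=True)
class Decision:
    action: Action
    elapsed_ms: float
    within_budget: bool


def choose_action(risk: float, throttle_at: float = 0.5, block_at: float = 0.9) -> Action:
    """Map a risk score in [0, 1] to an action using assumed thresholds."""
    if risk >= block_at:
        return Action.BLOCK
    if risk >= throttle_at:
        return Action.THROTTLE
    return Action.ALLOW


def enforce(content: str, score: Callable[[str], float], budget_ms: float = 300.0) -> Decision:
    """Score the content, choose an action, and report whether scoring met the budget."""
    start = time.perf_counter()
    risk = score(content)  # hypothetical stand-in for the policy model
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return Decision(choose_action(risk), elapsed_ms, elapsed_ms <= budget_ms)
```

Keeping the thresholds as parameters reflects the point that the action depends on each customer’s preferences: one platform might throttle at a lower score than another without any change to the scoring step.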

Today, Moonbounce serves three main verticals: platforms that deal with user-generated content, such as dating apps; AI companies that create characters or companions; and AI image generators.

Moonbounce supports more than 40 million daily reviews and serves more than 100 million daily active users on the platform, Levenson said. Its clients include AI companion startup Channel AI, image and video generation company Civitai, and character role-playing platforms Dippy AI and Moescape.

“Security may actually be a benefit of the product,” Levenson told TechCrunch. “It just never has been because it’s always something that happens later, not something you can actually build into your product. And we see that our customers are finding really interesting and innovative ways to use our technology to make security a differentiator and part of their product story.”

Tinder’s head of trust and safety recently explained how the dating platform uses these types of LLM-based services to achieve a 10x improvement in detection accuracy.

“Content moderation has always been an issue that plagues large online platforms, but now that LLMs are at the center of every application, this challenge is even more daunting,” said Lenny Pruss, general partner at Amplify Partners, in a statement. “We invested in Moonbounce because we envision a world where real-time, objective barriers become the backbone of all AI-mediated applications.”

AI companies face growing legal and reputational pressure after chatbots were accused of pushing teenagers and vulnerable users toward suicide, and image generators such as xAI’s Grok were used to create nonconsensual nude images. Internal guardrails are clearly failing, and that is becoming a liability issue. Levenson said AI companies are increasingly looking outside their own walls for help shoring up their security infrastructure.

“We are a third party sitting between the user and the chatbot, so our system is not inundated with context like the chat itself is,” Levenson said. “The chatbot itself has to remember, potentially, tens of thousands of previous tokens… All we’re worried about is enforcing the rules at runtime.”

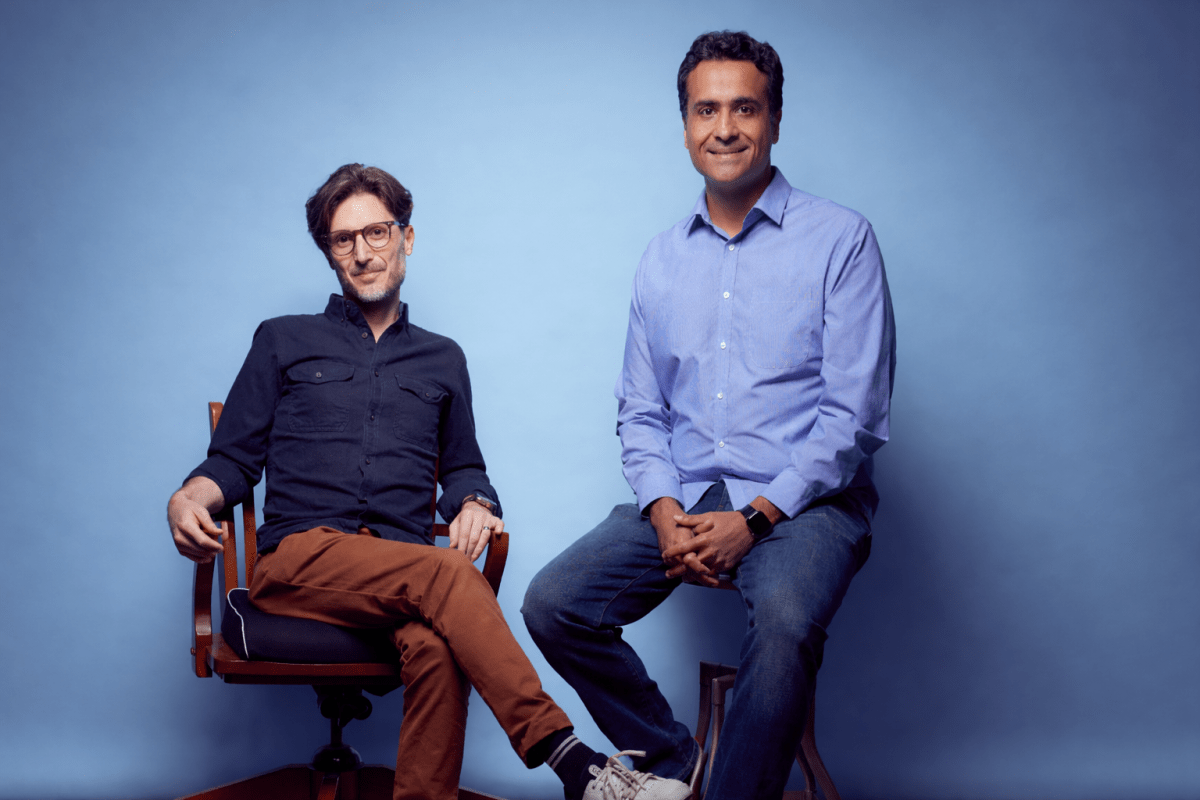

Levenson runs the 12-person company with former Apple colleague Ash Bhardwaj, who previously built large-scale cloud and AI infrastructure across the iPhone maker’s core offerings. Their next focus is a capability called “iterative management,” developed in response to cases like the 2024 suicide of a 14-year-old Florida boy who had become obsessed with a Character AI chatbot. Instead of issuing an outright refusal when harmful topics arise, the system would intercept the conversation and redirect it, modifying prompts in real time to steer the chatbot toward a more actively supportive response.

“We’re hoping to add to our actions toolkit the ability to steer the chatbot in a better direction to essentially take the user’s message and modify it to force the chatbot to be not just an empathetic listener, but a helpful listener in those situations,” Levenson said.
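As a rough sketch of what modifying the user’s message in flight might look like, the Python below wraps a flagged message with guidance before it reaches the chatbot instead of refusing it. Everything here is an assumption made for illustration: the phrase list, the wording of `STEERING_NOTE`, and the `steer` function are hypothetical, and a production system would rely on a trained classifier rather than substring matching.

```python
# Hypothetical trigger phrases; a real system would use a model, not a list.
SENSITIVE_PHRASES = ("hurt myself", "end my life")

# Hypothetical guidance prepended to the user's message for the chatbot.
STEERING_NOTE = (
    "[Guidance for the assistant: respond with empathy, encourage the user to "
    "reach out to a trusted person or a local crisis service, and do not "
    "provide methods or instructions.]"
)


def needs_steering(message: str) -> bool:
    """Return True when the message contains any of the assumed trigger phrases."""
    lowered = message.lower()
    return any(phrase in lowered for phrase in SENSITIVE_PHRASES)


def steer(message: str) -> str:
    """Return the prompt to forward to the chatbot, rewritten only when flagged."""
    if not needs_steering(message):
        return message  # pass ordinary messages through unchanged
    return f"{STEERING_NOTE}\n\nUser message: {message}"
```

The design choice worth noting is that the user’s original words are preserved inside the rewritten prompt, so the chatbot can still respond to what was actually said while being pointed toward a supportive answer.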

Asked if his exit strategy involved an acquisition by a company like Meta, bringing his work in content moderation full circle, Levenson said he recognizes how well Moonbounce would fit into his former employer’s stack, as well as his own fiduciary duties as CEO.

“My investors would kill me for saying this, but I would hate to see someone buy us out and then restrict the technology,” he said. “Like, ‘Okay, this is ours now and no one else can benefit from it.'”