OpenAI is introducing Trusted Contact, a new safety feature in ChatGPT that users can choose to activate. It allows adult users to designate someone to receive a notification if the system or reviewers notice signs of serious self-harm risk during conversations. The feature is available worldwide to users aged 18 and older, and to users aged 19 and older in South Korea.

Trusted Contact builds on the existing parental controls and safety notifications for linked teen accounts, offering a similar option to all adult users on a voluntary basis.

How ChatGPT’s trusted contact feature works

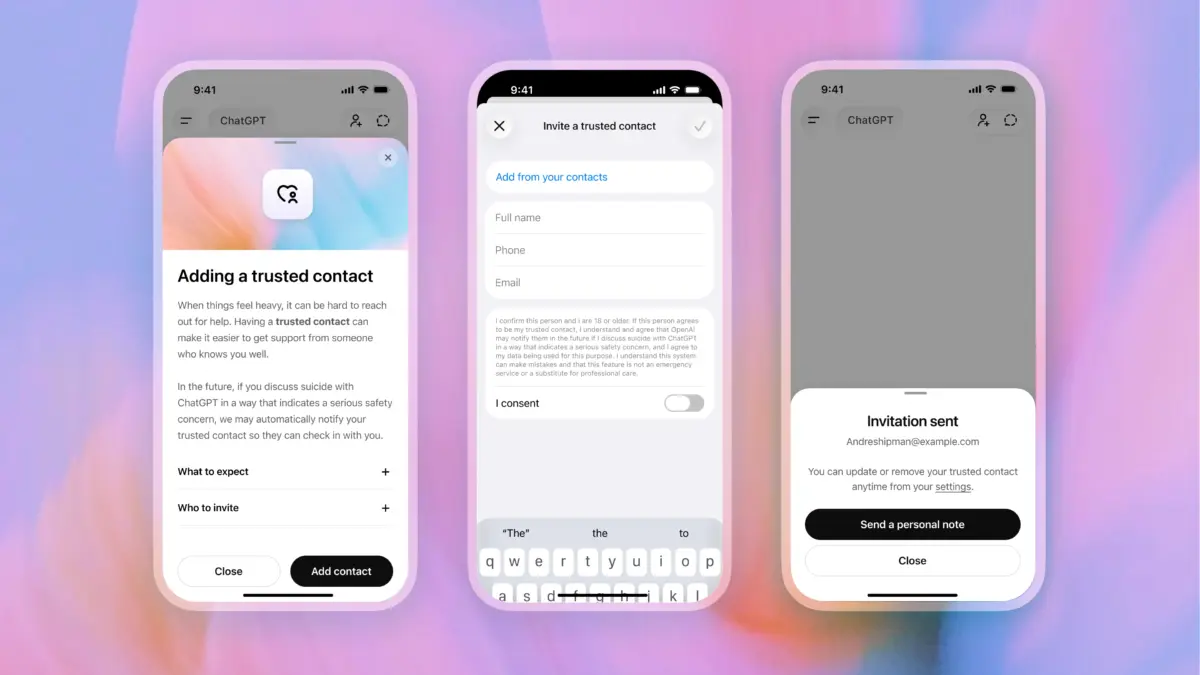

Setting up the feature and triggering a notification involves several steps:

- The user adds an adult as a trusted contact through the ChatGPT settings.

- The designated contact receives an invitation explaining the role and must accept it within one week.

- If the contact declines, the user has the option to nominate a different adult.

- When automated monitoring detects a potentially serious self-harm concern, ChatGPT tells the user that the trusted contact may be informed and suggests conversation starters for reaching out to that contact.

- A small team of trained reviewers evaluates the situation before sending any notification.

- If reviewers determine that a serious safety concern exists, ChatGPT sends the trusted contact a brief notification by email, text message, or in-app alert if they have a ChatGPT account.
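
The steps above can be read as a series of gates that must all pass before anyone is contacted. The Python sketch below is purely illustrative and is not OpenAI's implementation: the names `TrustedContactInvite`, `InviteStatus`, and `should_notify` are hypothetical, and treating an unanswered invitation as expired after one week is an assumption, since the article says only that the contact must accept within that window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum, auto

# "Within one week" comes from the article; everything else here is assumed.
INVITE_WINDOW = timedelta(days=7)


class InviteStatus(Enum):
    PENDING = auto()
    ACCEPTED = auto()
    DECLINED = auto()
    EXPIRED = auto()  # hypothetical state for invitations answered too late


@dataclass
class TrustedContactInvite:
    sent_at: datetime
    status: InviteStatus = InviteStatus.PENDING

    def respond(self, accepted: bool, now: datetime) -> InviteStatus:
        """Record the contact's answer; late answers mark the invite expired."""
        if self.status is not InviteStatus.PENDING:
            return self.status  # an invitation can only be answered once
        if now - self.sent_at > INVITE_WINDOW:
            self.status = InviteStatus.EXPIRED
        elif accepted:
            self.status = InviteStatus.ACCEPTED
        else:
            self.status = InviteStatus.DECLINED
        return self.status


def should_notify(invite: TrustedContactInvite,
                  flagged_by_monitoring: bool,
                  confirmed_by_reviewers: bool) -> bool:
    """All three gates must pass: accepted invite, automated flag, human review."""
    return (invite.status is InviteStatus.ACCEPTED
            and flagged_by_monitoring
            and confirmed_by_reviewers)
```

In this sketch, an automated flag alone never triggers a notification. Human review is a separate, required condition, which mirrors the order of the steps described in the list.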

OpenAI says it aims to review safety notifications within one hour. Users can change or remove their trusted contact at any time, and trusted contacts can remove themselves through the help center.

What ChatGPT shares with a trusted contact and how it fits into OpenAI's safety system

Notifications are intentionally limited. They briefly state that self-harm was brought up in a potentially concerning way, encourage the contact to check in with the user, and link to expert guidance on handling sensitive conversations. Chat details and transcripts are not shared with the trusted contact, even when a notification is sent.
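
One way to picture this data-minimization rule is an allowlist: only explicitly named fields can reach the trusted contact, so a transcript cannot be included by accident. The Python sketch below is an assumption-laden illustration, not OpenAI's actual notification format. The field names, the `build_notification` helper, and the example URL are all hypothetical.

```python
# Hypothetical field names; the article lists only the kinds of information shared.
ALLOWED_FIELDS = ("reason", "suggestion", "guidance_url")


def build_notification(review_record: dict) -> dict:
    """Copy only allowlisted fields; anything else in the record is dropped."""
    return {field: review_record[field] for field in ALLOWED_FIELDS}


# Illustrative internal record; "transcript" stands in for chat details.
record = {
    "reason": "Self-harm came up in a potentially concerning way.",
    "suggestion": "Consider checking in with them.",
    "guidance_url": "https://example.com/guidance",  # placeholder, not a real link
    "transcript": ["..."],
}

notification = build_notification(record)
```

Because the function copies fields by name rather than deleting unwanted ones, any new field added to the internal record stays private by default.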

Trusted Contact adds an extra layer of protection alongside existing safeguards rather than replacing them. ChatGPT still encourages users to contact emergency services, crisis helplines, or mental health professionals when necessary. The system is designed to refuse harmful requests related to suicide or self-harm and to direct users to local crisis resources.

OpenAI says the feature was developed with feedback from its Global Physician Network, which includes more than 260 licensed physicians in 60 countries, as well as its Expert Council on Well-Being and AI and external organizations such as the American Psychological Association.

Trusted Contact is rolling out gradually, and OpenAI has not announced when all eligible accounts will gain access.