Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Almost everything conventional artificial intelligence tools It lives in the cloud and the way you use them is pretty simple too. Simply type your message or command, transmit it to the OpenAI, Google or Anthropic servers where the most advanced models run, and get your response. While that workflow is certainly convenient, it also means that your directions, documents, and personal information are sent to third-party servers. Additionally, there is a constant dependence on Internet connectivity. Most of the time it’s not a big hassle, but if your internet connection is spotty or you’re traveling, it can be a pretty serious annoyance.

As a lifelong repairman, I wanted something different. I wanted an AI assistant that would run on my own network, on hardware that I had full control over, and most importantly, that didn’t require a subscription to unlock full functionality. That curiosity led me to build a small local AI setup using Ollama and Open WebUI on a $200 mini PC. The idea was simple: run Be to handle the Local LLMs and expose them using Open WebUI, which provides a very familiar browser-based interface.

Having used it for a while, I’m amazed at how practical the setup is for solving real problems in everyday use.

Ollama dramatically simplifies setting up local AI

Get started with a single command

The biggest problem with local AI has been the setup process. It’s not particularly difficult, but I would describe it as tedious. Running language models often means dealing with dependency conflicts and manual configuration. Ollama drastically simplifies that entire process. Ollama works as a local runtime for great language modelspackaging them in a way that makes downloading and launching them extremely easy. Simply install Ollama and select the models you want to try.

Once Ollama is up and running, a single command downloads an optimized version and starts running it locally. Ollama also exposes a local API that allows other tools to communicate with the model as if it were a remote AI service. I have used this API to integrate Ollama with other self-hosted tools like karaoke before. That little detail also turns my mini PC into something closer to a full AI server.

Now, let’s be clear: the hardware itself is not particularly powerful. I’m using a small x86 mini PC with a modest processor and only 16 GB of RAM and no dedicated GPU. But for the type of use cases I’m looking at, that hasn’t been an impediment. The mini PC can handle modern models reasonably well. Especially, optimized models like Llama 3 and Mistral work well in this environment. Using Ollama for the task is attractive because it makes changing models just as easy. In case one doesn’t work the way I like, I can delete it and download another one with a simple command.

Open WebUI turns a local model into a useful wizard

A browser-based interface makes local AI accessible to all devices

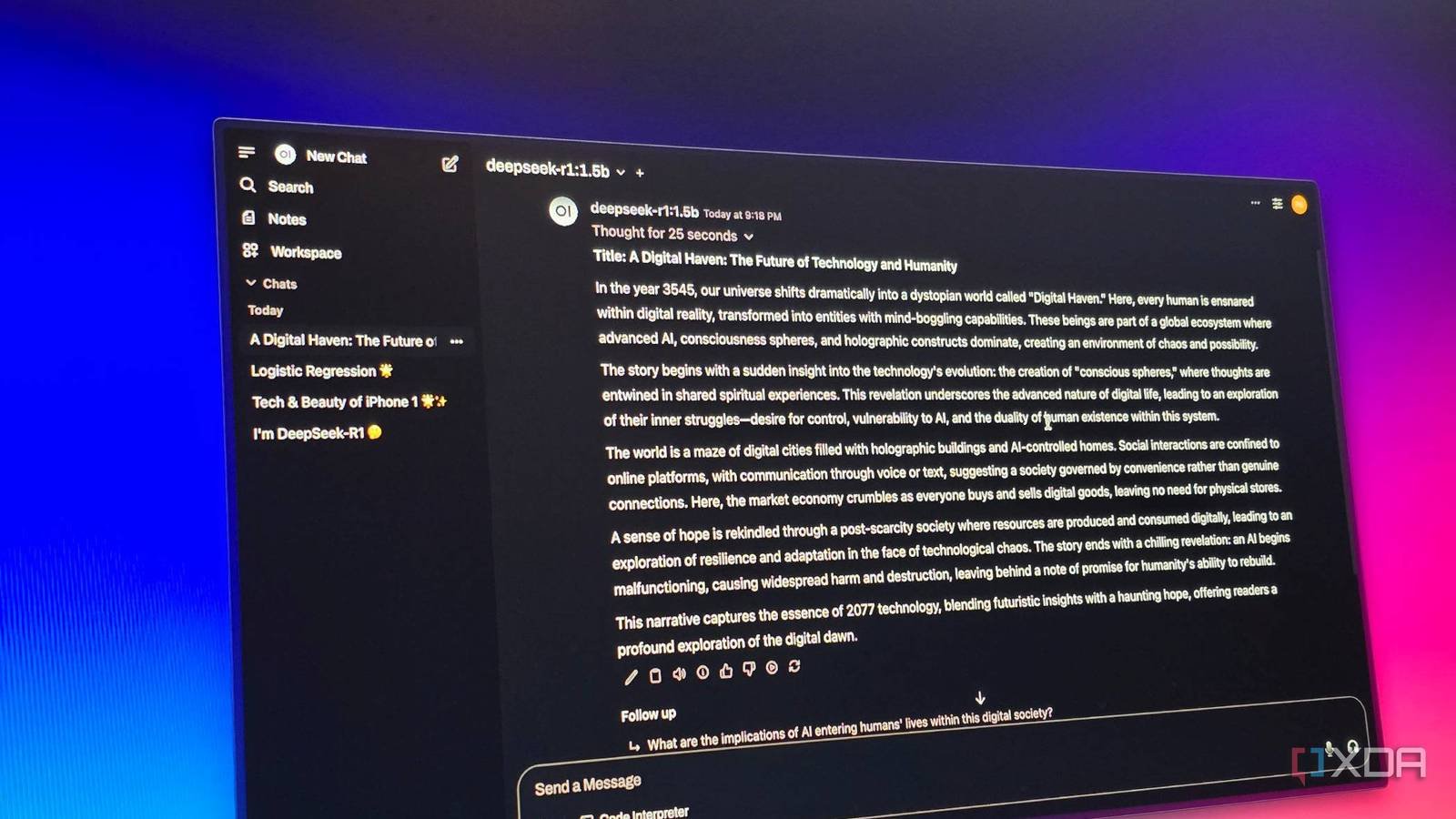

As easy as Ollama is to run a local LLM on your machine, it doesn’t really provide a simple interface for using the LLM. That’s where Open web user interface steps in by providing a complete chat interface on top of Ollama. It runs as a web application and connects directly to the models running on your system. Once set up, it automatically detects available models and allows me to interact with them through a browser window, which is a very similar experience to using a trading model like ChatGPT or Gemini.

You can open a tab, choose a model from the list and start writing your message. The model responds within a threaded conversation and their chat history remains available for later reference. Precisely how things work with commercial solutions. Switching between models only requires one click, and it’s specifically this interface that makes the setup really useful as an everyday solution.

Since the LLM runs locally on my mini PC, I can access the AI assistant from any device on my network. As long as it’s on my network, I can access it from my desktop, laptop, or even my phone without needing to install any additional software.

This setting also gives me the benefit of privacy. The data never really leaves my network because the model runs right there on my own hardware. Of course, performance varies quite a bit depending on which model you’re running, and some tasks like imaging are impossible on my humble mini PC, but as long as you keep your expectations in check, the smaller models feel fast enough.

It can’t replace cloud AI, but a small on-premises AI server is still worth it

Between Ollama and Open WebUI, it’s amazing how well this stack has integrated into my workflow. The mini PC runs in the background and acts as a small AI host that can be accessed by all devices. Once everything is set up, very little maintenance or ongoing setup is required unless you download new models.

Let’s face it: this setup is not a complete replacement for AI in the cloud. AI in the cloud It is significantly faster and capable of performing tasks like image generation and video generation that cannot be done with local models, especially on a mini PC. But for everyday tasks like brainstorming, grammar correction, and summaries, you have a private assistant always available and completely on-site.