I fully recognize that my computing habits are not normal, but I use all the major operating systems and some of the more specialized ones on a daily basis, and I break something almost every day. Sometimes it’s Windows 11, like when I recently broke my user account by updating the firmware on an Nvidia GPU and had to reinstall Windows from scratch. Other times, it’s systemd or GRUB that breaks Linux, because it’s always one of those two.

It could also be the firmware on my networking stack or the UEFI on my laptop, but everything breaks at some point. Usually that means figuring out how to fix it myself, which is both rewarding and frustrating, but often faster than waiting on customer support. The common thread is that every operating system problem is recoverable, even if that means re-imaging or reinstalling; the only unrecoverable computer problem is a failed backup.

Breaking my systems makes me a better manager

No, really, listen to me.

They always say that you learn more from failure than from success, and that is very true in the home lab. But getting to that point can be frustrating, and over the years I’ve learned coping methods that make problem-solving simpler when it’s needed. Everything from my network up is monitored, and the systems that do the monitoring do nothing else, so they’re still available when things go down.
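The article doesn’t say which monitoring software I run, so as a minimal sketch of the most basic check a dedicated monitoring box performs, here is a plain TCP reachability probe in Python. The `Target` class and the idea of probing a TCP port per service are illustrative assumptions, not a specific tool’s API; real monitoring stacks add scheduling, history, and alerting on top of checks like this.

```python
import socket
from dataclasses import dataclass


@dataclass
class Target:
    """A hypothetical service to watch: a label plus a host and TCP port."""
    name: str
    host: str
    port: int


def is_reachable(target: Target, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the target succeeds within the timeout."""
    try:
        with socket.create_connection((target.host, target.port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures,
        # which are all subclasses of OSError.
        return False


def check_all(targets: list[Target], timeout: float = 2.0) -> dict[str, bool]:
    """Probe every target and map its name to whether it answered."""
    return {t.name: is_reachable(t, timeout) for t in targets}
```

Usage would look like `check_all([Target("nas-web", "192.168.1.10", 5000)])`, where the address and port are placeholders for your own devices. Running this from a machine that hosts nothing else is the point: it keeps reporting even when the thing it watches has fallen over.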

With most major Linux distributions now using systemd, that’s where the troubleshooting begins when those installations start acting weird. I’ve found that debloating Windows usually ends in tears, so I prefer to be careful about what I install and keep daily backup images to fall back on if something goes wrong.

My smart home’s automations are deliberately designed and added only a few at a time, so that any problem is diagnosable. Honestly, that’s been easier now that I have a local LLM to help. It may be a little slow for some tasks, but figuring out YAML or Jinja syntax and why Home Assistant doesn’t do what I expect is a task it excels at.

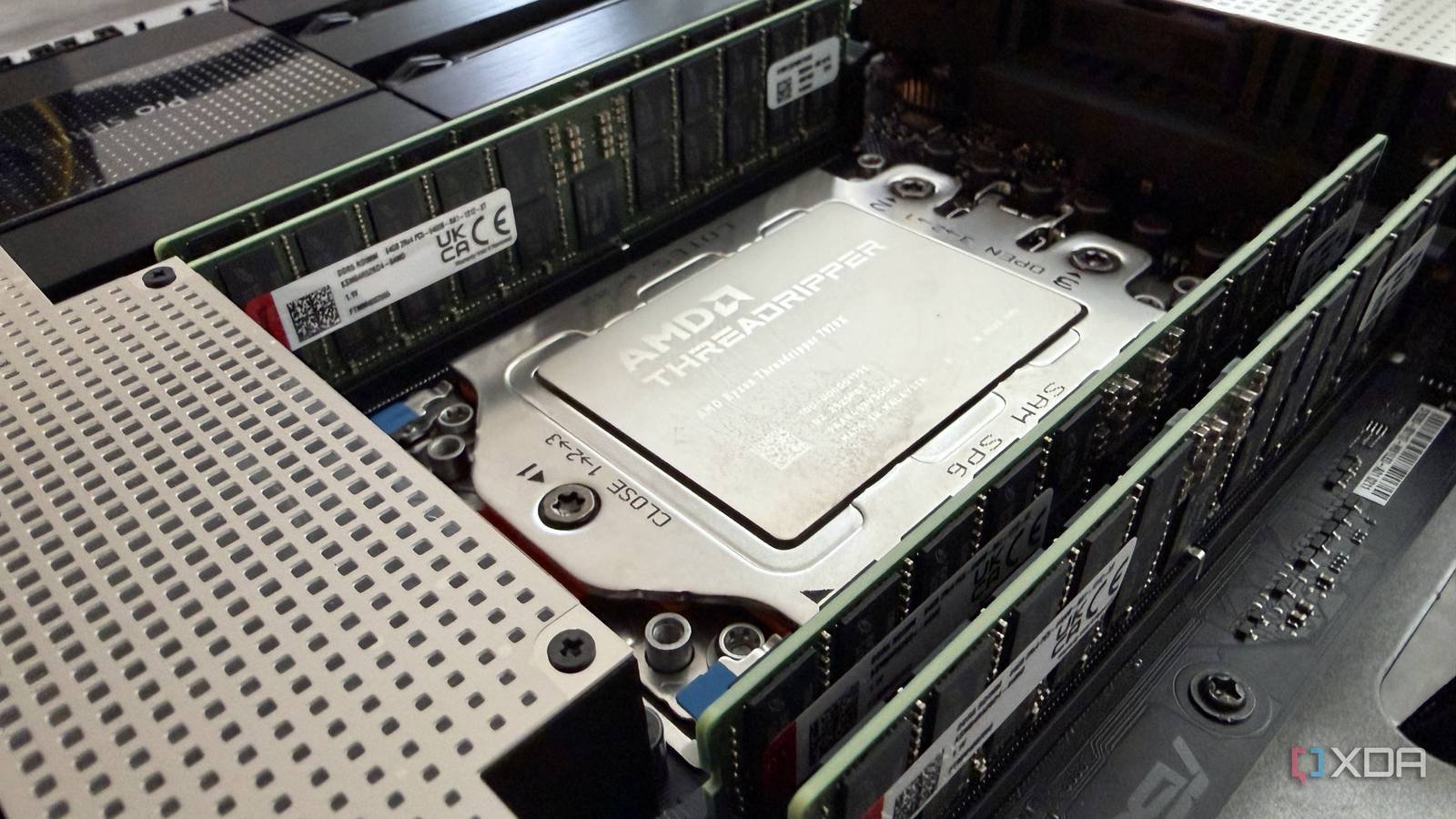

Virtualization helps

Being able to run operating systems as virtual machines gives me a greater sense of security. The networking is handled by the hypervisor and is software-defined, so I can restore it easily. Boot problems are usually misconfigurations in files that I can easily access and modify to get back up and running, without having to hunt for a live USB or reboot the entire system. And, well, if I can’t fix it, I can recreate the VM from a fresh image and get it working again quickly. Sometimes I do that instead of troubleshooting, depending on how much time I have.

Having a recovery mode is key

Separate management layers FTW

Whether it’s remote access or a way to manage my devices without leaving my desk, the ability to reset things without physical access is part of the success of my “break things, fix them later” mentality. KVM over IP is one of the most important parts of this, as it essentially gives me a second computer in front of my server that can impersonate me.

But it’s also about ensuring that I can still reach parts of my home network if I break a configuration on the switches or implement incorrect firewall rules. Normally I would need physical access to the console to fix this, but having a management VLAN that isn’t used for any other traffic means I’ll never be locked out of my networking stack.

And always stay on top of backups

We all want to have a 3-2-1 backup strategy implemented, but that doesn’t mean backing up everything everywhere. My important data is backed up to local storage on a dedicated NAS, and the really important data is also saved in various places in the cloud and on Backblaze, so there’s actually more than one off-site backup in case I need it. This also protects me from the problems of relying on a single backup point, although I must confess that I don’t test my backups as often as I should.
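The 3-2-1 rule is usually stated as: at least three copies of the data, on at least two different kinds of storage media, with at least one copy off-site. As a small sketch, assuming a hypothetical `BackupCopy` record per copy (this is not any backup product’s API), the rule can be checked mechanically so a plan that quietly drifts out of compliance gets flagged.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    """One copy of a dataset (hypothetical model)."""
    location: str  # e.g. "workstation", "nas", "backblaze"
    medium: str    # e.g. "ssd", "hdd", "cloud"
    offsite: bool


def violations_3_2_1(copies: list[BackupCopy]) -> list[str]:
    """Return a human-readable list of the 3-2-1 rules this plan breaks."""
    problems = []
    if len(copies) < 3:
        problems.append(f"only {len(copies)} copies, need 3")
    media = {c.medium for c in copies}
    if len(media) < 2:
        problems.append(f"only {len(media)} kind(s) of media, need 2")
    if not any(c.offsite for c in copies):
        problems.append("no off-site copy")
    return problems


def meets_3_2_1(copies: list[BackupCopy]) -> bool:
    """True when no rule is violated."""
    return not violations_3_2_1(copies)
```

Note that the original data counts as one of the three copies, so a primary copy plus a NAS copy plus a Backblaze copy would satisfy the rule, while two copies on the same NAS would not, however many snapshots they contain.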

The data from my home lab is a different story: it mostly records changes to the infrastructure, so it doesn’t need to be kept for as long. The most important data there is the documentation and automation I use to spin up virtual machines quickly and consistently, and to reset network configurations to known-good versions when I inevitably break something in DNS. It’s not always DNS, but it might as well be with how often it happens, and it’s a reminder that almost every electronic device in your home runs an operating system of some kind that can crash.
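Resetting a configuration to a known-good version can be as simple as comparing the live file against a saved copy and restoring the saved copy when they differ. The sketch below is one hypothetical way to do that in Python; the function names and the `.broken` suffix for the displaced file are assumptions for illustration, and real network gear usually needs its own import or rollback mechanism rather than a file copy. The same checksum comparison is also a cheap way to spot-check that a backup copy still matches its source.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def restore_if_drifted(live: Path, known_good: Path) -> bool:
    """Copy known_good over live when their contents differ.

    Returns True if a restore happened, False if live already matched.
    The displaced live file is kept next to it with a ".broken" suffix
    so the mistake can still be inspected afterwards.
    """
    if live.exists() and sha256_of(live) == sha256_of(known_good):
        return False
    if live.exists():
        shutil.copy2(live, live.with_name(live.name + ".broken"))
    shutil.copy2(known_good, live)
    return True
```

Keeping the broken file around rather than overwriting it is a deliberate choice: the point of breaking things in the lab is to learn what went wrong, and that’s hard to do once the evidence is gone.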

I push my systems to the limit in Dev, so my Prod systems are pristine

Look, things go wrong in my home lab, and that’s the point. I’ve overbuilt many parts of my setup for disaster recovery, because the biggest disaster is the person writing the code. Yes, that’s me, and I’ll happily break 5,000 things in Dev so that I don’t break those same 5,000 on the systems holding my important data. Once things break and I know how to recover from them, that learning is built into the machines I don’t experiment with, and everything runs a little better as a result.