Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Allow “I don’t know,” verify with citations, and use direct quotes to cut down on Claude’s hallucinations.

A Redditor spotted them in Anthropic’s official documentation, and these simple pointers can improve factual accuracy.

The instructions can reduce creative output, so it’s best to build a switch that turns them on and off.

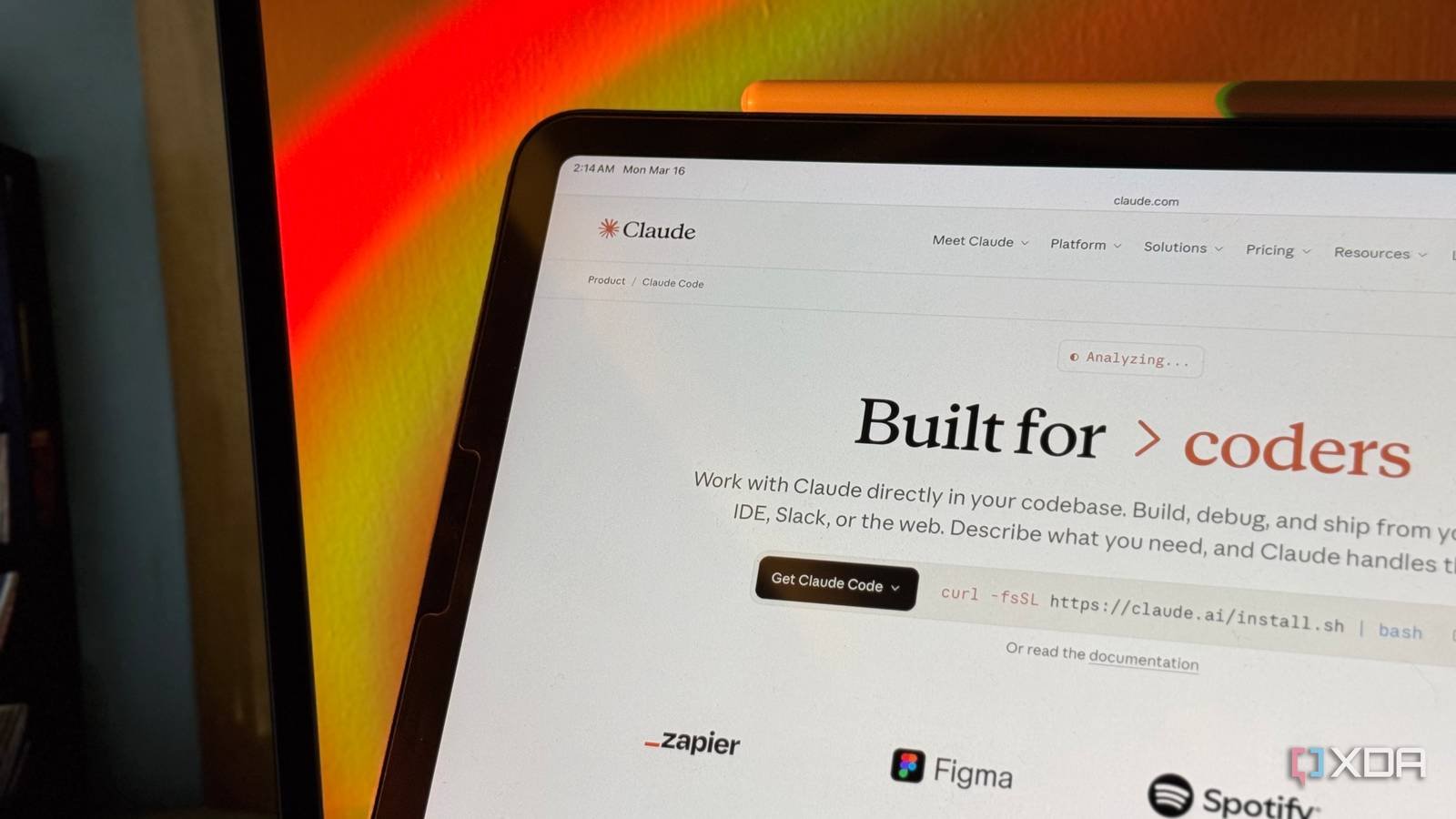

The good people here at XDA have fallen in love with Claude, and we’ve been looking for ways to get more out of it. Giving Claude instructions is a great way to make it behave the way you want, and while they often need some tweaking before they work properly, that’s part of the fun.

Someone on Reddit claimed that there are three instructions that, when used, “dramatically” reduce hallucinations. The best part is that these are actually listed in Anthropic’s documentation; it’s just that no one had noticed them until now.

A Reddit user may have found the recipe for fewer hallucinations

And they were under our noses the entire time.

Over on the Claude AI subreddit, user ColdPlankton9273 stumbled upon a page in the Claude API Docs. Titled “Reduce hallucinations,” the page details some simple ways you can make Claude hallucinate less and give better results.

It’s worth checking out the Reddit post and the documentation page for more details on how they work, but the main ideas are:

“Allow Claude to say I don’t know”

“Verify with citations”

“Use direct quotes for factual grounding”
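The quote-based pointers above lend themselves to a simple client-side check: if Claude has been asked to back its claims with word-for-word quotes, you can confirm that each quote really appears in the source text. The following is a minimal sketch of that idea, not something taken from the Anthropic page or the Reddit post. The helper names (`normalize`, `extract_quotes`, `unsupported_quotes`) and the sample texts are hypothetical, and it assumes quotes in the answer are wrapped in straight double quotes.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial formatting differences do not matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def extract_quotes(answer: str) -> list[str]:
    """Return every span wrapped in straight double quotes (an assumed convention)."""
    return re.findall(r'"([^"]+)"', answer)


def unsupported_quotes(answer: str, source: str) -> list[str]:
    """List quoted spans from the answer that do not appear verbatim in the source."""
    haystack = normalize(source)
    return [q for q in extract_quotes(answer) if normalize(q) not in haystack]


# Hypothetical sample data for illustration only.
source = "Refunds are available within 30 days of purchase. Shipping takes 5 business days."
answer = 'The policy says "refunds are available within 30 days" and "shipping is free worldwide".'

print(unsupported_quotes(answer, source))  # ['shipping is free worldwide']
```

Any quote that lands in the returned list is a candidate hallucination and can be flagged or sent back to Claude for retraction.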

ColdPlankton9273 tested these instructions and reported that they worked, but they need a bit more tweaking if you intend to use Claude for other tasks (and yes, it is good for more than just coding):

However, there is a trade-off. One paper (arXiv 2307.02185) found that citation restrictions reduce creative output. So I don’t run them all the time. I created a switch: investigation mode activates all three, default mode allows Claude to think freely.
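A switch like the one described in that quote could be sketched roughly as follows. This is an illustration under assumptions, not the Redditor’s actual setup: the instruction wording, the `BASE_PROMPT` text, and the `build_system_prompt` function are all hypothetical, and only the two mode names come from the quote. The returned string is meant to be used as the system prompt for a conversation.

```python
# Hypothetical wording for the three grounding instructions; adjust to taste.
GROUNDING_INSTRUCTIONS = (
    "If you are unsure or the documents do not contain the answer, say 'I don't know' instead of guessing.",
    "Before answering, extract word-for-word quotes from the provided documents that are relevant to the question.",
    "Support every factual claim with a direct quote; if you cannot find a supporting quote, retract the claim.",
)

# Placeholder base prompt, assumed for this example.
BASE_PROMPT = "You are a helpful assistant."


def build_system_prompt(mode: str = "default") -> str:
    """Return the system prompt for the given mode.

    "default" leaves the model unconstrained; "investigation" appends all
    three grounding instructions as a bulleted list.
    """
    if mode == "default":
        return BASE_PROMPT
    if mode == "investigation":
        rules = "\n".join(f"- {rule}" for rule in GROUNDING_INSTRUCTIONS)
        return f"{BASE_PROMPT}\n\nFollow these rules:\n{rules}"
    raise ValueError(f"unknown mode: {mode!r}")


print(build_system_prompt("investigation"))
```

Keeping the rules in one tuple means the two modes cannot drift apart: creative work uses the bare prompt, and fact-sensitive work gets all three rules at once.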

One Redditor, Mean_Smell_6469, says they use Claude as a customer support agent. Before, they say, “Claude was confidently answering questions that weren’t in the FAQ at all, just plausible-sounding fiction,” but once they added “‘Allow Claude to say I don’t know’ + explicit instructions to answer only from the FAQ provided,” Claude stopped making things up and started connecting people to the owner. As such, if you’ve had hallucination issues with Claude recently, these rules are worth trying out.
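For a support setup like that one, the FAQ-only restriction and the “I don’t know” escape hatch can live in a single system prompt. The sketch below is one possible shape, assuming the FAQ is available as question and answer pairs. The `build_support_prompt` function, the `FALLBACK` sentence, the `<faq>` wrapper, and the sample FAQ entry are all hypothetical and not taken from the Redditor’s configuration.

```python
# Hypothetical fallback reply that hands the customer over to a human.
FALLBACK = "I don't know. Let me connect you with the owner."


def build_support_prompt(faq: dict[str, str]) -> str:
    """Build a system prompt that restricts answers to the supplied FAQ."""
    entries = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in faq.items())
    return (
        "You are a customer support agent. Answer only from the FAQ below.\n"
        f"If the FAQ does not cover the question, reply exactly: {FALLBACK}\n\n"
        f"<faq>\n{entries}\n</faq>"
    )


# Sample FAQ invented for illustration.
faq = {"What are your opening hours?": "We are open 9am to 5pm, Monday to Friday."}
print(build_support_prompt(faq))
```

Spelling out the exact fallback sentence gives the surrounding application something easy to detect, so it can route the conversation to a person whenever Claude uses it.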