Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Large language models are running into limits in domains that require an understanding of the physical world, from robotics to autonomous driving and manufacturing. That limitation is pushing investors toward world models, with AMI Labs raising a $1.03 billion seed round shortly after World Labs secured $1 billion.

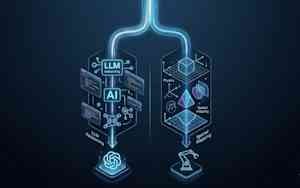

Large language models (LLMs) excel at processing abstract knowledge through next-token prediction, but they fundamentally lack grounding in physical causality. They cannot reliably predict the physical consequences of real-world actions.

AI researchers and thought leaders are increasingly vocal about these limitations as the industry attempts to take AI out of web browsers and into physical spaces. In an interview with podcaster Dwarkesh Patel, Turing Award winner Richard Sutton warned that LLMs simply imitate what people say rather than modeling the world, which limits their ability to learn from experience and adapt to changes in the world.

This is why LLM-based models, including vision-language models (VLMs), can behave brittlely and break with very small changes to their inputs.

Google DeepMind CEO Demis Hassabis echoed this sentiment in another interview, noting that current AI models suffer from “jagged intelligence.” They can solve complex math olympiad problems but fail at basic physics because they lack critical capabilities regarding real-world dynamics.

To solve this problem, researchers are focusing on building world models that act as internal simulators, allowing AI systems to safely test hypotheses before taking physical actions. However, “world models” is a general term that encompasses several different architectural approaches.
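To make the "internal simulator" idea concrete, here is a minimal sketch in Python. It assumes a toy one-dimensional track, and the names `State`, `predict`, and `choose_action` are hypothetical. The hand-written kinematics in `predict` merely stand in for a learned world model; the point is only that candidate actions are tried in imagination and unsafe ones are rejected before anything moves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    position: float  # metres along a 1D track
    velocity: float  # metres per second


def predict(state: State, accel: float, dt: float = 0.1) -> State:
    """Hypothetical world model: simple kinematics standing in for a learned simulator."""
    velocity = state.velocity + accel * dt
    position = state.position + velocity * dt
    return State(position, velocity)


def choose_action(state, goal, wall, candidates, horizon=10):
    """Roll each candidate forward in imagination and skip any that reaches the wall."""
    best_action, best_cost = None, float("inf")
    for accel in candidates:
        imagined = state
        safe = True
        for _ in range(horizon):
            imagined = predict(imagined, accel)
            if imagined.position >= wall:
                safe = False  # imagined collision: never try this in the real world
                break
        if not safe:
            continue
        cost = abs(goal - imagined.position)
        if cost < best_cost:
            best_action, best_cost = accel, cost
    return best_action


start = State(position=0.0, velocity=0.0)
action = choose_action(start, goal=4.0, wall=5.0, candidates=[0.0, 2.0, 5.0, 8.0, 10.0])
print(action)
```

In this toy setup the most aggressive acceleration would overshoot into the wall within the planning horizon, so the planner settles on a gentler one. A real system would replace `predict` with a trained model and search a far larger action space.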

Three distinct architectural approaches have emerged, each with different trade-offs.

The first major approach focuses on learning latent representations rather than trying to predict the dynamics of the world at the pixel level. Championed by AMI Labs, this method relies heavily on the Joint Embedding Predictive Architecture (JEPA).

JEPA models attempt to mimic how humans understand the world. When we observe the world, we don’t memorize every pixel or irrelevant detail of a scene. For example, if you watch a car driving down a street, you track its trajectory and speed; you do not calculate the exact reflection of light on every leaf of the trees in the background.

JEPA models reproduce this human cognitive shortcut. Instead of forcing the neural network to predict exactly what the next frame of a video will look like, the model learns a smaller set of abstract, or “latent,” features. It discards irrelevant details and focuses entirely on the basic rules of how scene elements interact. This makes the model robust to background noise and to the small changes that break other models.
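The difference between predicting pixels and predicting latent features can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the one-dimensional "frames", the hand-written `encode` function (a real JEPA learns its encoder and predictor from data), and the helper names. The sketch only shows why an error measured in latent space can ignore background flicker that dominates a pixel-level error.

```python
import random

WIDTH = 32


def render(position: int, rng: random.Random) -> list[float]:
    """Toy 1D 'frame': one bright object pixel over random background flicker."""
    frame = [rng.uniform(0.0, 0.3) for _ in range(WIDTH)]
    frame[position] = 1.0
    return frame


def encode(frame: list[float]) -> float:
    """Hand-written stand-in for a learned encoder: keep the object position, drop the background."""
    return float(max(range(len(frame)), key=frame.__getitem__))


def predict_latent(latent: float, velocity: float) -> float:
    """Stand-in for a learned predictor that operates purely in latent space."""
    return latent + velocity


def pixel_loss(a: list[float], b: list[float]) -> float:
    """Mean squared error over raw pixel values."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def latent_loss(context_frame, target_frame, velocity) -> float:
    """JEPA-style objective: compare predicted and actual embeddings, not pixels."""
    predicted = predict_latent(encode(context_frame), velocity)
    return (predicted - encode(target_frame)) ** 2


rng = random.Random(0)
context = render(10, rng)
target = render(12, rng)       # object moved two pixels; background re-randomized
pixel_guess = render(12, rng)  # correct object position, different background noise

jepa_error = latent_loss(context, target, velocity=2.0)
pixel_error = pixel_loss(pixel_guess, target)
print(jepa_error, pixel_error)
```

Even a guess that places the object correctly is penalized in pixel space because the background cannot be predicted, while the latent error is zero once the object's motion is captured.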

This architecture is highly compute- and memory-efficient. By ignoring irrelevant details, it requires far fewer training examples and runs with significantly lower latency. These characteristics make it suitable for applications where efficiency and real-time inference are non-negotiable, such as robotics, autonomous vehicles, and high-stakes enterprise workflows.

For example, AMI is partnering with healthcare company Nabla to use this architecture to simulate operational complexity and reduce cognitive load in fast-paced healthcare environments.

Yann LeCun, pioneer of the JEPA architecture and co-founder of AMI, explained in an interview with Newsweek that JEPA-based world models are designed to be "controllable in the sense that you can give them goals and, by construction, all they can do is achieve those goals."

A second approach relies on generative models to build entire spatial environments from scratch. Adopted by companies such as World Labs, this method takes an initial prompt (either an image or a text description) and uses a generative model to create a 3D Gaussian splat. Gaussian splatting is a technique for representing 3D scenes using millions of small mathematical particles that define geometry and lighting. Unlike flat video generation, these 3D representations can be imported directly into standard 3D and physics engines, such as Unreal Engine, where users and other AI agents can freely navigate and interact with them from any angle.
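As a rough illustration of the particle-based 3D representation described above (commonly called Gaussian splatting), the sketch below stores a scene as a list of Gaussians and blends their colors at a query point. It is heavily simplified and makes several assumptions: the `Gaussian` class and helper names are hypothetical, each particle is isotropic (production splats use full 3D covariances and view-dependent color), and the blend ignores the depth-sorted compositing a real renderer performs.

```python
import math
from dataclasses import dataclass


@dataclass
class Gaussian:
    center: tuple[float, float, float]
    scale: float    # isotropic standard deviation (a simplifying assumption)
    opacity: float
    color: tuple[float, float, float]  # RGB in [0, 1]


def weight(g: Gaussian, point) -> float:
    """Contribution of one Gaussian at a 3D point: opacity times Gaussian falloff."""
    d2 = sum((p - c) ** 2 for p, c in zip(point, g.center))
    return g.opacity * math.exp(-d2 / (2.0 * g.scale ** 2))


def color_at(scene, point):
    """Opacity-weighted blend of colors at a point (no depth ordering)."""
    weights = [weight(g, point) for g in scene]
    total = sum(weights)
    if total == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(
        sum(w * g.color[i] for w, g in zip(weights, scene)) / total
        for i in range(3)
    )


scene = [
    Gaussian(center=(0.0, 0.0, 0.0), scale=1.0, opacity=0.9, color=(1.0, 0.0, 0.0)),
    Gaussian(center=(10.0, 0.0, 0.0), scale=1.0, opacity=0.9, color=(0.0, 0.0, 1.0)),
]
print(color_at(scene, (0.0, 0.0, 0.0)))
```

Because the scene is an explicit list of geometric primitives rather than a stream of rendered frames, it can be handed to external tools, which is the property that lets such scenes be loaded into conventional 3D engines.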

The main benefit here is a dramatic reduction in the time and one-time generation cost required to create complex interactive 3D environments. It addresses the exact problem described by World Labs founder Fei-Fei Li, who noted that LLMs are ultimately like “wordsmiths in the dark,” possessing flowery language but lacking spatial intelligence and physical experience. World Labs’ Marble model gives AI the spatial awareness it lacks.

While this approach is not designed for split-second real-time execution, it has enormous potential for spatial computing, interactive entertainment, industrial design, and building static training environments for robotics. The enterprise value is evident in Autodesk's strong backing of World Labs to integrate these models into its industrial design applications.

The third approach uses an end-to-end generative model to process prompts and user actions, continuously generating the scene, physical dynamics, and reactions on the fly. Instead of exporting a static 3D file to an external physics engine, the model itself acts as the engine. It ingests an initial prompt along with a continuous stream of user actions and renders subsequent frames of the environment in real time, natively calculating physics, lighting, and object reactions.
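The control flow of such a system can be sketched as a loop that consumes one action per frame and emits the next state. This is an illustration only: `step` and `run` are hypothetical names, the hand-written physics in `step` stands in for a forward pass of a learned generative model that would output pixels, and the 24 frames per second target is used solely to show the per-frame time budget.

```python
from typing import Iterable, Iterator

FPS = 24
FRAME_BUDGET_MS = 1000.0 / FPS  # roughly 41.7 ms to produce each frame


def step(state: dict, action: str) -> dict:
    """Hypothetical stand-in for one forward pass of a learned generative model."""
    vx = state["vx"] + {"left": -1.0, "right": 1.0}.get(action, 0.0)
    vy = state["vy"] - 9.8 / FPS  # gravity is applied inside the 'model' itself
    x = state["x"] + vx / FPS
    y = state["y"] + vy / FPS
    if y <= 0.0:                  # the object rests on the ground
        y, vy = 0.0, 0.0
    return {"x": x, "y": y, "vx": vx, "vy": vy}


def run(initial: dict, actions: Iterable[str]) -> Iterator[dict]:
    """Consume a stream of user actions and yield one new state per frame."""
    state = initial
    for action in actions:
        state = step(state, action)
        yield state


initial = {"x": 0.0, "y": 1.0, "vx": 0.0, "vy": 0.0}
frames = list(run(initial, ["right"] * FPS))  # one second of interaction
print(len(frames), frames[-1])
```

There is no external physics engine in this loop: whatever produces the next frame must also account for dynamics, which is why each frame has to fit within the per-frame budget.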

DeepMind's Genie 3 and Nvidia's Cosmos fall into this category. These models provide a very simple interface for generating infinite interactive experiences and massive volumes of synthetic data. DeepMind demonstrated this natively with Genie 3, showing how the model maintains strict object permanence and consistent physics at 24 frames per second without relying on a separate memory module.

This approach translates directly into powerful synthetic data factories. Nvidia Cosmos uses this architecture to scale synthetic data and physical AI reasoning, enabling robotics and autonomous vehicle developers to synthesize rare and dangerous edge cases without the cost or risk of physical testing. Waymo (an Alphabet subsidiary) built its world model on Genie 3, adapting it to train its autonomous vehicles.

The disadvantage of this end-to-end generative method is the large computational cost required to continuously render physics and pixels simultaneously. Still, the investment is necessary to achieve the vision laid out by Hassabis, who argues that a deep internal understanding of physical causality is required because current AI lacks critical capabilities to operate safely in the real world.

LLMs will continue to serve as an interface for reasoning and communication, but world models are being positioned as critical infrastructure for physical and spatial data pipelines. As the underlying models mature, we are seeing the emergence of hybrid architectures that leverage the strengths of each approach.

For example, cybersecurity startup DeepTempo recently developed LogLM, a model that integrates elements of LLMs and JEPA to detect anomalies and cyber threats from security and network logs.