For years now, the GPU conversation has revolved around VRAM, and rightly so. 6GB gave way to 8GB, and 8GB became the new minimum, one that quickly started to feel like it wasn’t enough. Modern games have exploded in size, textures have become much sharper, and memory demands have grown faster than VRAM capacities.

At GTC 2026, Nvidia didn’t respond the way most people expected. We didn’t get any new RTX 50-series Super cards, nor larger memory pools across the board. Instead, Nvidia introduced a way to get by with less VRAM. Neural texture compression (NTC) is a new take on traditional texturing in games, and if it sticks around, it could change what “enough VRAM” means.

Nvidia’s NTC is a new approach to texturing your games

Stored data is being transformed into reconstructed details

Traditional texturing has always been brute force disguised as efficiency. Every surface you see in a game is essentially an image wrapped around a 3D object. Because the GPU samples these images in real time, large amounts of precomputed image data have to sit directly in VRAM. Each surface is typically a stack of texture maps (color, normal, roughness, and so on), and each map’s footprint grows with the square of its resolution: doubling the width and height quadruples the memory. That’s why higher-quality textures have pushed VRAM usage up, and why 8GB is starting to feel very cramped.
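To put rough numbers on that scaling, here is a small Python sketch that totals the VRAM cost of a material’s texture maps. The formats and sizes are assumptions for illustration, not figures from Nvidia or any particular game: uncompressed RGBA8 at 4 bytes per texel, BC7 block compression at 16 bytes per 4x4 block, a full mip chain, and a hypothetical material made of four same-sized maps.

```python
import math

MIB = 1024 * 1024


def level_bytes(width: int, height: int, fmt: str) -> int:
    """Bytes for a single mip level in one of two assumed storage formats."""
    if fmt == "rgba8":
        # Uncompressed: 4 bytes per texel (R, G, B, A at 8 bits each).
        return width * height * 4
    if fmt == "bc7":
        # BC7 stores each 4x4 texel block in 16 bytes; partial blocks round up.
        return math.ceil(width / 4) * math.ceil(height / 4) * 16
    raise ValueError(f"unknown format: {fmt}")


def texture_bytes(width: int, height: int, fmt: str, mips: bool = True) -> int:
    """Total bytes for one texture map, optionally including its full mip chain."""
    total = level_bytes(width, height, fmt)
    # Each mip level halves both dimensions until it reaches 1x1.
    while mips and (width > 1 or height > 1):
        width, height = max(1, width // 2), max(1, height // 2)
        total += level_bytes(width, height, fmt)
    return total


def material_bytes(size: int, num_maps: int, fmt: str) -> int:
    """Hypothetical square material built from num_maps maps of the same size."""
    return num_maps * texture_bytes(size, size, fmt)


if __name__ == "__main__":
    # Compare resolutions and formats for an assumed four-map material.
    for size in (1024, 2048, 4096):
        raw = material_bytes(size, 4, "rgba8") / MIB
        bc7 = material_bytes(size, 4, "bc7") / MIB
        print(f"{size}x{size}: {raw:7.1f} MiB uncompressed, {bc7:6.1f} MiB BC7")
```

Running it shows each doubling of resolution roughly quadrupling the footprint, with the mip chain adding about a third on top of the base level. Block compression such as BC7 is already standard in shipping games, so the figure any new scheme has to beat is the compressed one, not the raw one.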

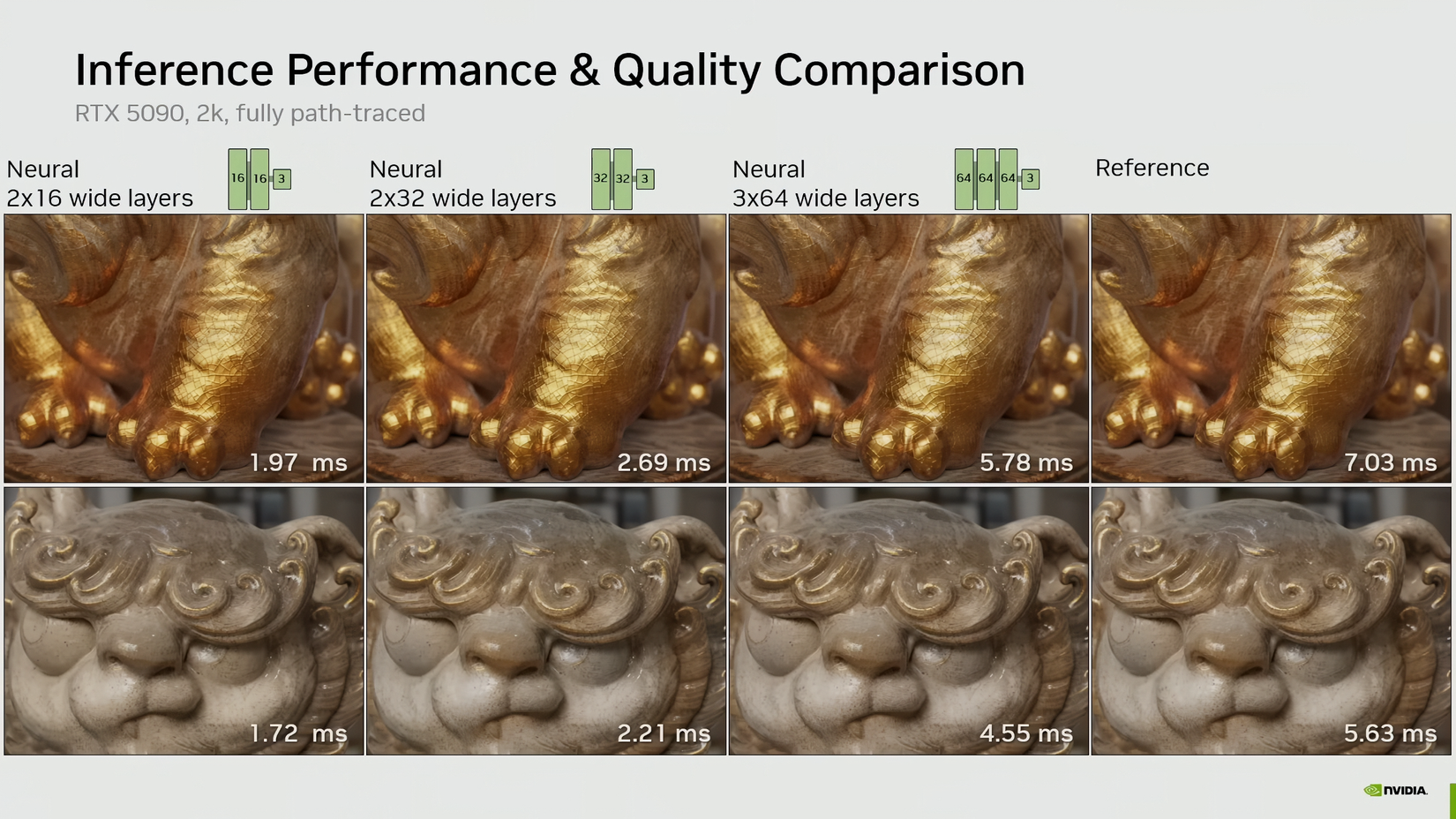

Nvidia’s neural texture compression flips this model. Instead of storing textures, it stores a compact neural representation of them: a small model that reconstructs the texture on demand using Tensor Cores. The headline result is a dramatic reduction in memory footprint. In Nvidia’s own demos, texture data that would normally need over 6GB of VRAM drops to under a gigabyte (970MB), roughly a sixfold reduction. In effect, NTC turns static texture assets into compact learned data that is reconstructed in real time.
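The idea of storing a model instead of the image can be sketched in a few dozen lines. The toy class below keeps a low-resolution grid of 8-bit latent features plus a tiny two-layer MLP, and reconstructs all channels of any texel on request. It is untrained (the weights and latents are random placeholders), so its colors are meaningless; the point is to show where the stored bytes live and that decoding happens per texel. Nvidia’s published research describes a broadly similar structure, but the class name, grid scale, layer sizes, and fp16 weight assumption here are hypothetical, and a real decoder would run on Tensor Cores rather than in Python loops.

```python
import random


class ToyNeuralTexture:
    """Hypothetical, untrained neural texture: a low-resolution grid of 8-bit
    latent features plus a tiny MLP that decodes any texel on demand.
    All sizes and layer shapes are illustrative assumptions."""

    def __init__(self, width, height, channels, grid_scale=4, latent_dim=8, hidden=16, seed=0):
        rng = random.Random(seed)
        self.width, self.height, self.channels = width, height, channels
        self.scale, self.latent_dim = grid_scale, latent_dim
        self.grid_w, self.grid_h = width // grid_scale, height // grid_scale
        # Quantized latent grid, one byte per value. A real encoder would learn these.
        self.grid = bytes(rng.randrange(256) for _ in range(self.grid_w * self.grid_h * latent_dim))
        n_in = latent_dim + 2  # latent features + texel position inside its grid cell
        # Random placeholder weights; training would fit them to the source maps.
        self.w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.w2 = [[rng.uniform(-1, 1) for _ in range(hidden)] for _ in range(channels)]
        self.b2 = [0.5] * channels

    def decode(self, x, y):
        """Reconstruct every channel of texel (x, y) from the stored data alone."""
        gx, gy = x // self.scale, y // self.scale
        base = (gy * self.grid_w + gx) * self.latent_dim
        # Dequantize the stored bytes back to roughly [-1, 1].
        z = [b / 127.5 - 1.0 for b in self.grid[base:base + self.latent_dim]]
        z += [(x % self.scale) / self.scale, (y % self.scale) / self.scale]
        # Hidden layer with ReLU activation.
        hidden = [max(0.0, sum(w * v for w, v in zip(row, z)) + b)
                  for row, b in zip(self.w1, self.b1)]
        out = [sum(w * h for w, h in zip(row, hidden)) + b for row, b in zip(self.w2, self.b2)]
        # Map outputs to 8-bit channel values, clamped to [0, 255].
        return [min(255, max(0, round(v * 255))) for v in out]

    def param_count(self):
        return (sum(len(r) for r in self.w1) + len(self.b1)
                + sum(len(r) for r in self.w2) + len(self.b2))

    def stored_bytes(self):
        # Latent grid at 1 byte per value + MLP weights assumed stored as fp16.
        return len(self.grid) + 2 * self.param_count()

    def raw_bytes(self):
        # The same maps stored uncompressed at 1 byte per channel per texel.
        return self.width * self.height * self.channels
```

For a 1024x1024 texture with eight channels, `ToyNeuralTexture(1024, 1024, channels=8)` stores about 16 times fewer bytes than the raw maps, almost entirely because the latent grid is four times smaller in each dimension. That ratio is a product of these made-up settings, not a prediction of NTC’s real compression.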

As a result, VRAM would no longer be the bottleneck it is today. It isn’t eliminated, since other rendering data such as geometry and lighting still consumes VRAM, but textures become a far less dominant share of it.

NTC could become an excuse for 8GB GPUs to stay

There is a less comfortable angle to all this.

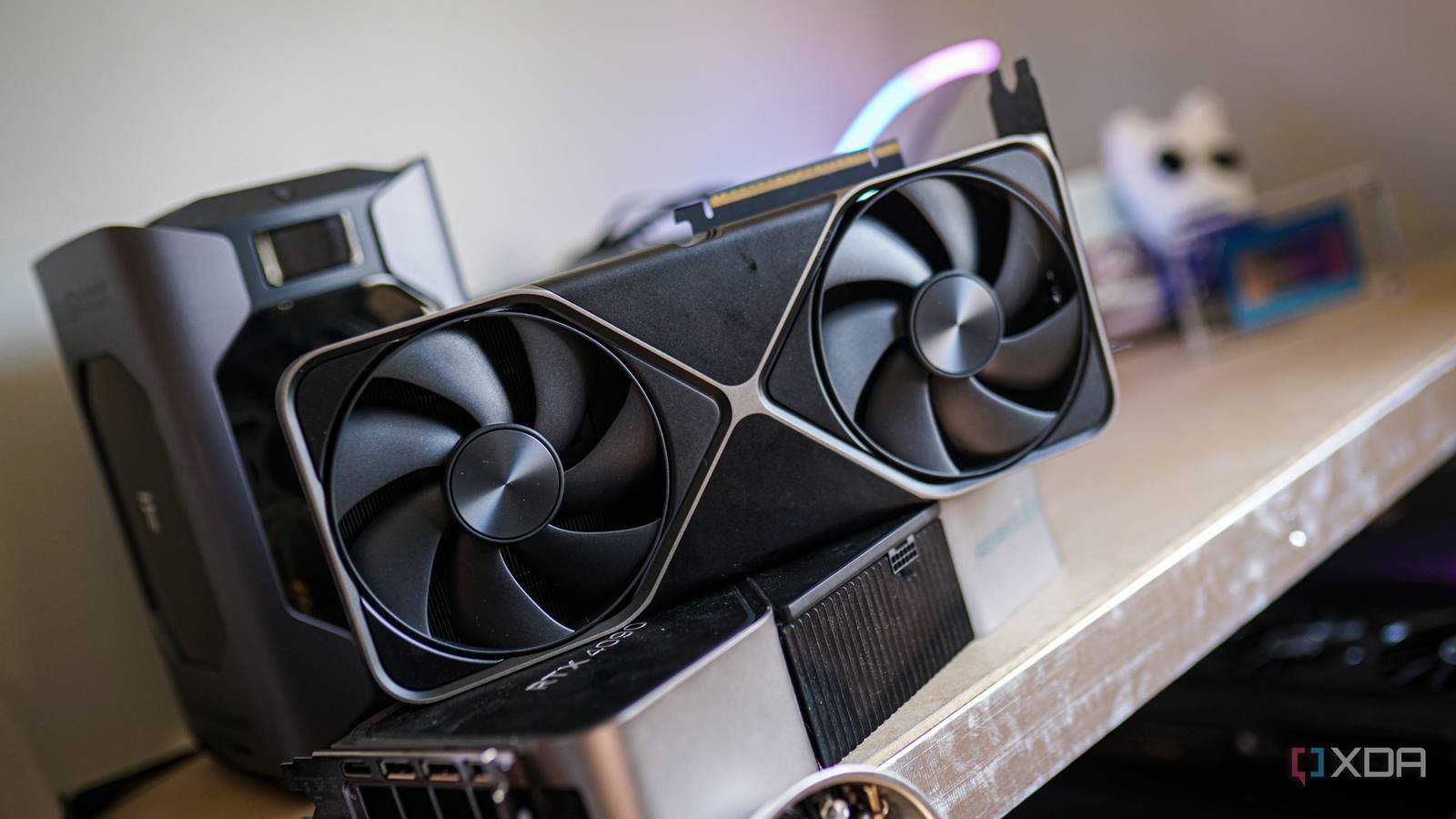

For years, users have pushed back against 8GB GPUs, especially as newer titles started to exceed that limit at higher settings. And yet, even the latest RTX 50 series includes 8GB configurations, such as the RTX 5060 and the 8GB version of the RTX 5060 Ti. These cards lean heavily on technologies like Multi Frame Generation to stay relevant. Now, with NTC, Nvidia has something stronger than marketing: a technical justification.

If neural texture compression reduces VRAM usage by the margins Nvidia claims, then 8GB will no longer look so restrictive on paper. That would give Nvidia room to keep lower-capacity variants in circulation, potentially extending the trend into the RTX 60 series as well. And yet, the frustration of gamers and other consumers hasn’t gone away, because we still run into memory limits in real games today. NTC may point to a better future, but it does nothing for the limits you hit right now in games like Borderlands 4, Hogwarts Legacy, or Avatar: Frontiers of Pandora. Seen this way, NTC starts to look more like a way for Nvidia to redefine the VRAM problem than to fix it outright.

Your old 8GB GPU won’t get a second life

RTX 20 and 30 series will not fully benefit from NTC

Unfortunately, if you’re still hanging on to an old 8GB card, NTC isn’t the miracle upgrade it might seem. Neural texture compression has multiple implementation paths, and they don’t all offer the same benefits. The most efficient mode, which reconstructs textures in real time during rendering and delivers the greatest VRAM savings, is called Inference on Sample. For all its memory savings, this mode also demands significant AI compute performance, the kind that newer architectures are much better equipped to handle.

Older GPUs with earlier-generation Tensor Cores, such as the RTX 20 and 30 series, are more likely to fall back on Inference on Load, where textures are reconstructed in advance and then stored in more traditional compressed formats. While this still reduces bandwidth and transfer overhead, it doesn’t cut VRAM usage nearly as much as Inference on Sample does. So while NTC can technically run on older hardware, it won’t transform it.
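The trade-off between the two paths can be modeled with a small simulation. The class names follow Nvidia’s Inference on Load and Inference on Sample modes; everything else is illustrative. `decode_texel` is a hypothetical stand-in for the neural decoder, the on-load path is assumed to re-encode its output at about 1 byte per texel (as a BC7-style format would), and the 8:1 compressed size in the usage block is made up.

```python
def decode_texel(x: int, y: int) -> int:
    """Hypothetical stand-in for a neural decoder: returns one 8-bit texel value."""
    return (x * 31 + y * 17) % 256


class InferenceOnLoad:
    """Decode every texel once at load time; sampling is then a plain memory read."""

    def __init__(self, compressed_bytes: int, width: int, height: int,
                 resident_bytes_per_texel: float = 1.0):
        self.width, self.height = width, height
        self.transfer_bytes = compressed_bytes  # only the compact form is read from disk
        # All inference happens here, before rendering starts.
        self.decoded = [decode_texel(x, y) for y in range(height) for x in range(width)]
        self.decode_calls = width * height
        # Assumption: decoded maps are re-encoded to a block format (~1 byte/texel).
        self.resident_bytes = int(width * height * resident_bytes_per_texel)

    def sample(self, x: int, y: int) -> int:
        return self.decoded[y * self.width + x]  # no inference per fetch


class InferenceOnSample:
    """Keep only the compressed representation resident; decode on every fetch."""

    def __init__(self, compressed_bytes: int, width: int, height: int):
        self.width, self.height = width, height
        self.transfer_bytes = compressed_bytes
        self.resident_bytes = compressed_bytes  # VRAM holds just the compact form
        self.decode_calls = 0

    def sample(self, x: int, y: int) -> int:
        self.decode_calls += 1  # inference cost is paid on every texture fetch
        return decode_texel(x, y)


def render_frames(texture, frames: int, samples_per_frame: int) -> None:
    """Simulate a renderer fetching a fixed number of texels per frame."""
    for frame in range(frames):
        for i in range(samples_per_frame):
            texture.sample(i % texture.width, (i + frame) % texture.height)


if __name__ == "__main__":
    size = 512
    compressed = size * size // 8  # hypothetical 8:1 ratio vs. the resident block format
    for tex in (InferenceOnLoad(compressed, size, size),
                InferenceOnSample(compressed, size, size)):
        render_frames(tex, frames=3, samples_per_frame=1000)
        print(f"{type(tex).__name__}: {tex.resident_bytes} bytes resident, "
              f"{tex.decode_calls} decode calls")
```

With these settings the on-load texture keeps 262,144 bytes resident but never runs inference after loading, while the on-sample texture keeps only 32,768 bytes resident and runs the decoder on every fetch. That per-fetch compute cost is what favors GPUs with faster Tensor Cores.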

Then there’s the fact that older cards playing current games won’t find any significant benefit from neural texture compression. This is a new technology that developers must manually integrate into their pipelines. That means we’ll see the benefits of NTC only in future games, or in the few games that can be updated to implement NTC instead of traditional textures. So NTC won’t suddenly revive GPUs that are already struggling in modern titles. Instead, with this technology, Nvidia is just laying the groundwork for what comes next.

AI appears to be replacing traditional graphics pipelines

Nvidia loves finding solutions to traditional problems

In recent years, Nvidia’s approach to graphics rendering has been a clear sign that AI is actively and rapidly replacing traditional pipelines. DLSS uses deep learning to upscale lower-resolution frames, and Frame Generation uses AI models to create and insert entirely generated frames. NTC likewise uses machine learning (small, specifically trained neural networks) to reconstruct texture data in real time. Individually, these are all smart optimizations; together, they point to a bigger picture in which Nvidia is no longer interested in overcoming the challenges of traditional rendering. Instead, the Green Team is determined to get around them.

While we once relied on raw raster performance, bandwidth, and memory capacity, we are now leaning toward AI-powered reconstruction at almost every stage of the process. Resolution, motion, lighting, and textures are increasingly becoming results of inference rather than direct calculation or storage, and it all points to a fundamentally different philosophy for games of the future. If this direction holds, the future of graphics will be defined less by how much raw data you can store or process, and more by how convincingly you can recreate it on the fly.

This is the age of algorithmic witchcraft

Raw specifications are clearly becoming secondary to algorithmic wizardry.

If anything has become clear in recent years, it’s that we are approaching the end of the brute-force era. We’re moving toward a reality where GPUs act less like high-speed warehouses and more like machines that paint a masterpiece from a handful of notes.

Whether you find this pivot brilliant or see it as a cynical avoidance of hardware limitations, the trajectory couldn’t be clearer: raw specifications are now becoming secondary to algorithmic wizardry. The most powerful graphics card will not be the one with the most memory. Instead, it will be the one with the smartest software stack behind it.