Joe Maring / Android Authority

AI. AI. AI. I’m sure you’re tired of hearing this word over the past few years. It’s everywhere: in every feature, every app, and every keynote slide, whether it actually improves your phone or not. And honestly, I’ve felt the same. Sure, some features are pretty useful, but I think most AI features on Android are gimmicks that you try once and forget about.

One of those gimmicks, at least in my opinion, has always been on-device AI. Most of our phones’ AI features still rely on the cloud (or a hybrid architecture), and I’ve long believed that smartphones simply can’t match the processing power of AI data centers. That’s why on-device AI has never felt all that useful to me, at least not to the extent that companies claim.

That was the case until I tried one of Google’s lesser-known on-device AI apps, which made me reconsider that stance a bit.

Google AI Edge Gallery is the hidden gem that you didn’t know about

Sanuj Bhatia / Android Authority

Google’s AI Edge Gallery app isn’t exactly new. It was actually released about a year ago as an experimental application, but it recently attracted attention again because Google updated it to support Gemma 4, its latest and greatest open-source AI models. That update is what finally made me give it a proper chance.

The app is available on both Android and iOS. I have tried it on my iPhone Air, my Google Pixel 10 Pro, and even the Oppo Find X9 Ultra. There are some differences between platforms, but the core idea remains the same: you download these open-source AI models directly to your device and then use them for different tasks.

There are plenty of predefined use cases in the app, such as using a general chatbot, transcribing audio, asking questions about an image, or even trying out some agent-style tasks. I always thought that on-device AI models, especially with limited parameters, wouldn’t be that useful in real life. But that opinion changed a bit during a recent trip, where I found the tool surprisingly useful.

Ask questions as you go

Sanuj Bhatia / Android Authority

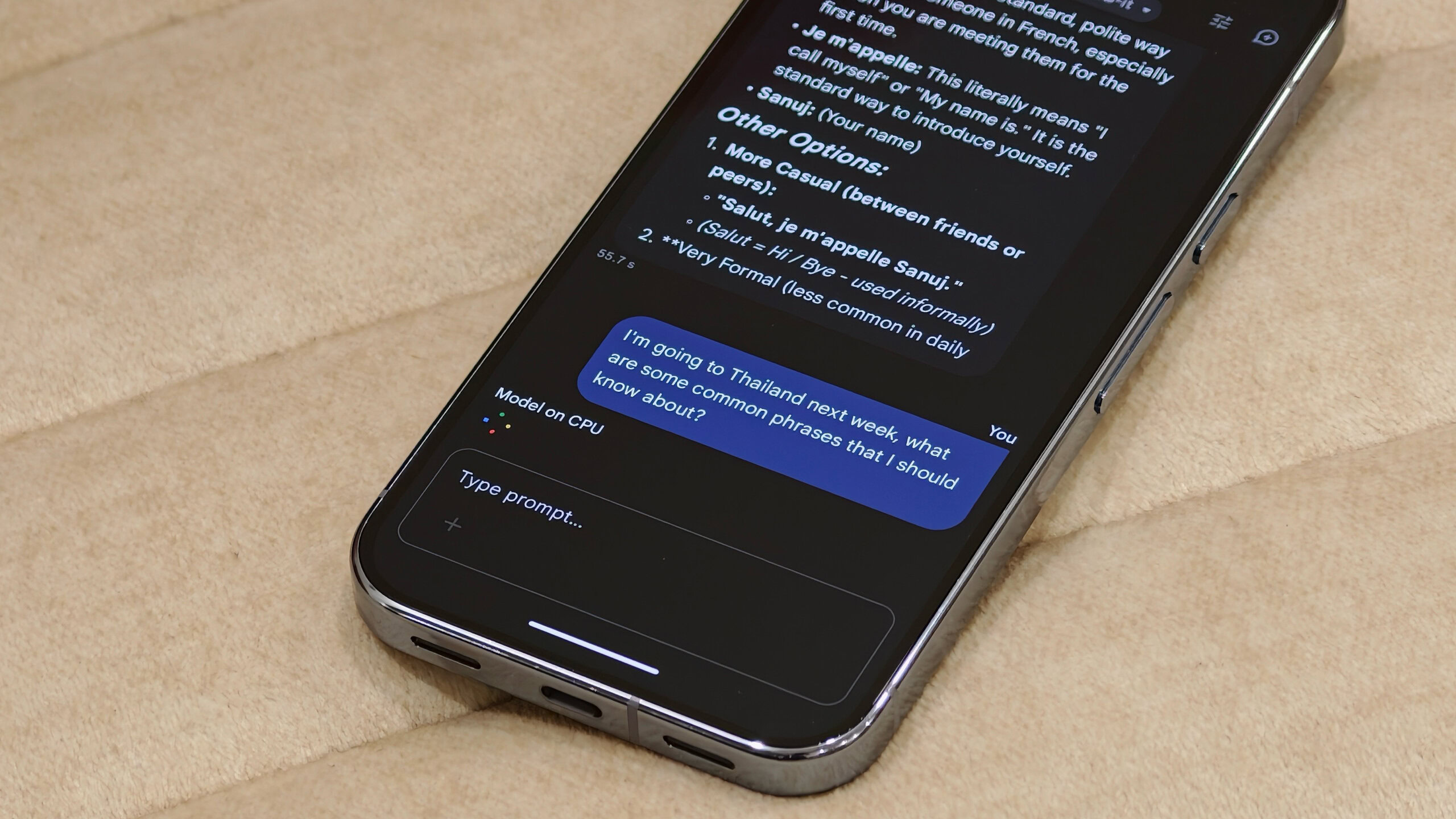

The best use case, and I know it’s still not perfect, is to have access to an AI chatbot on-device and offline. The Google AI Edge Gallery app gives you access to AI Chat, which is essentially similar to other chatbots like Gemini.

It’s pretty simple. Enter a message and wait for the model to respond. It’s multimodal, so you can use text, voice, or even images, and it puts all of that into context. It’s definitely slower than something like ChatGPT or Gemini, but the fact that it works entirely on the device without internet is what makes it stand out.

Using AI on my phone at 32,000 feet without internet is what finally made on-device AI seem real.

I recently used it on a flight to Thailand, where I asked it basic things like phrases I should know and even asked about some movie titles from the in-flight system to get recommendations and approximate IMDb ratings.

It mentions that it can’t access the internet and relies on its training data, but if you frame your questions accordingly, it will still give you useful answers when you’re completely offline.

Offline translation

This is probably the best use case for this application. I usually travel with an eSIM when I’m abroad, but there are always places where the connectivity is not good, and that’s where this really helped me. Thanks to Gemma 4’s multimodal capabilities, you can use the AI Edge Gallery app as a proper offline translator.

The app includes a dedicated Audio Scribe tool that can transcribe speech and also translate it on the fly. On phones where the app can use the hardware properly, translation is surprisingly fast, almost as fast as dedicated translation apps, and it works reliably even without an internet connection.

Ask Image

Another feature that builds on this is the ability to ask questions about images using the offline model. You can attach a photo and ask anything about it. I didn’t think I’d use it much, but it turned out to be very useful while traveling, especially for translating menus or understanding signs in different languages.

Plus, it helps save my valuable mobile data, since everything happens on the device and you’re not uploading images to a server and waiting for a response.

There are a few things that the AI Edge Gallery Android app needs to fix

Overall, I’ve enjoyed using the Google AI Edge Gallery app as an offline AI tool, but it’s not without its drawbacks. My biggest complaint is that chats are not saved.

For example, with something like Gemini or ChatGPT, each conversation is saved as a thread, so you can come back to it and pick up where you left off. I understand that offline models have limited context lengths because of hardware constraints, but there should at least be an option to continue a conversation until that limit is reached.

The bigger problem, however, is that Google still doesn’t fully utilize Android hardware. The AI Edge Gallery app is available on both Android and iOS, and I have tested it on several devices. On iPhones, the app uses the GPU for processing, which is generally much faster for AI tasks.

On Android, it’s a mixed experience. Phones with high-end chips like the Snapdragon 8 Elite Gen 5 can access the GPU and run the app much more efficiently. But on Google’s Pixel 10 Pro, the app doesn’t seem to fully utilize the Tensor GPU or even the chip’s NPU.

The app mentions that the AICore-based experience, which can take advantage of the NPU, is currently limited to beta testers. For users like me who aren’t part of that program, it falls back on CPU processing instead of taking advantage of on-device AI models like Gemini Nano, making it noticeably slower.

Robert Triggs / Android Authority

To put this in perspective, on the same audio input, my iPhone Air took less than a second to respond, while the Pixel 10 Pro took over 10 seconds to perform the same task. That kind of gap really affects the overall experience.

I’m sure I’m in the minority here, talking about an on-device AI app that has limited capabilities and, let’s be honest, a pretty niche user base. But considering how much Google has been pushing on-device AI, it’s a little frustrating to see it not fully utilizing its own Tensor hardware in this app. It seems like a bug and I really hope Google fixes it as soon as possible.

That said, being able to access a Gemma model right on my phone and get answers at 32,000 feet has been eye-opening for me. This is probably the first time I’ve seen a real, practical use case for on-device AI.
