Bottom line: Nvidia CEO Jensen Huang warned on the Dwarkesh Podcast that DeepSeek optimizing its AI models for Huawei’s Ascend chips instead of American hardware would be “a horrible outcome” for the United States, as the Chinese AI lab prepares to launch its entry-level V4 model on Huawei’s Ascend 950PR processor. DeepSeek’s migration from Nvidia’s CUDA to Huawei’s CANN framework threatens to break the software-hardware dependency that underpins American AI dominance, even as US lawmakers push to place DeepSeek on the export control entity list.

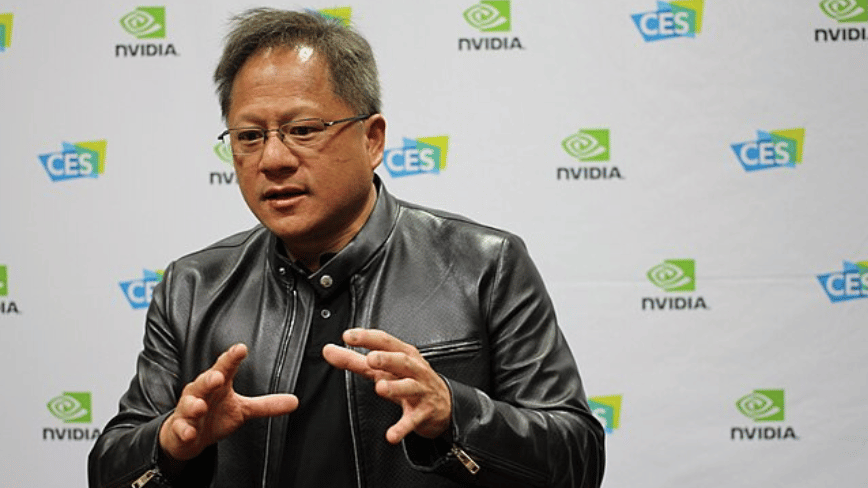

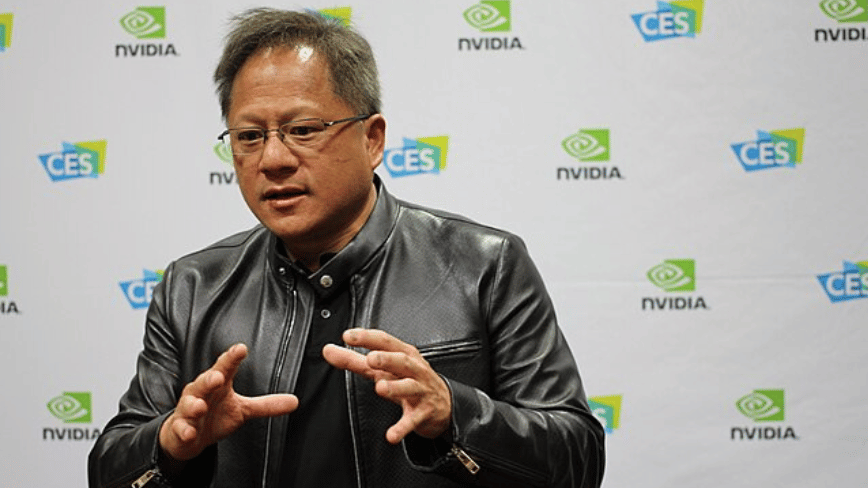

Nvidia CEO Jensen Huang said on the Dwarkesh Podcast on Wednesday that if DeepSeek optimized its new AI models to run on Huawei chips instead of American hardware, it would be “a horrible outcome” for the United States. The warning frames the emerging partnership between China’s most capable AI lab and its most advanced chipmaker as a direct threat to the technological leverage that has underpinned American dominance of AI for the past decade.

“If future AI models are optimized in a very different way than the US technology stack,” Huang said, and as “AI is spreading to the rest of the world” with Chinese standards and technology, China “will be greater than” the US. The statement is notable because it comes from the CEO of the company that has benefited the most from the current arrangement, in which virtually every cutting-edge AI model in the world is trained on Nvidia GPUs using Nvidia’s CUDA software framework.

What DeepSeek is building

DeepSeek is preparing to launch V4, a basic multimodal model expected later this month. The Information reported in early April that V4 would run on Huawei’s latest Ascend 950PR processor, while a separate Reuters report suggested the model had been trained on Nvidia’s Blackwell chips, which would constitute a violation of US export controls. The two reports are not necessarily contradictory: a model can be trained on one set of hardware and deployed for inference on another.

What makes the Huawei integration significant is the software migration behind it. DeepSeek has spent months rewriting its core code to work with Huawei’s CANN framework, moving away from the CUDA ecosystem that Nvidia has spent two decades building as the foundation of AI development. CUDA’s dominance has functioned as a second layer of American control over AI, beyond the chips themselves. Export restrictions may limit which Nvidia hardware reaches China, but as long as Chinese labs wrote their software for CUDA, they continued to rely on Nvidia’s ecosystem even when using alternative processors. DeepSeek’s move to CANN breaks that dependency.

DeepSeek’s V3 model, released in late 2024, was trained on 2,048 Nvidia H800 GPUs, a chip tailor-made for the Chinese market whose sale in China was banned in 2023. The company has already shown that it can produce frontier-competitive models with fewer resources than its American rivals. Its R1 reasoning model matched or outperformed models that cost orders of magnitude more to train. V4 would extend that approach by showing that the company can do it without any American hardware.

The hardware gap and why it may not matter

In terms of raw performance, Huawei’s chips are not competitive with Nvidia’s best. The Ascend 910C, the predecessor to the 950PR, offers about 60% of the inference performance of Nvidia’s H100, a chip that is two generations behind Nvidia’s current best. American chips are about five times more powerful than their Chinese equivalents today, and that gap is projected to widen to 17 times by 2027. Huawei aims to ship 750,000 AI chips in 2026, but its total production represents only 3 to 5% of Nvidia’s aggregate computing power.

But Huang’s concern is not the current performance gap. He said on the podcast that even if China had inferior chips, it could still catch up with the United States in AI development given its “abundant energy” and “a large group of AI researchers.” The implication is that raw hardware performance is only one variable, and that software optimization, researcher talent, and power availability can offset a disadvantage in silicon. If V4 works well on Ascend chips, it validates an alternative path for AI development that is not dependent on Nvidia at any point in the supply chain.

The export control paradox

The situation exposes a tension at the center of US chip export policy. Nvidia restarted production of the H200, a more powerful chip, for sale in China, as Huang confirmed in March. But China has been blocking H200 imports to protect Huawei’s domestic chip business, and Nvidia’s chief financial officer has said the company has not recorded revenue from H200 sales in China. Controls designed to limit China’s AI capabilities are instead accelerating the development of a Chinese alternative.

DeepSeek’s experience with its R2 model illustrates both the promise and the limits of the Huawei path. R2 was repeatedly delayed due to training failures on Huawei hardware. Chinese authorities had urged DeepSeek to train on domestic chips, but the company encountered stability issues that forced it to revert to Nvidia GPUs for training while using Huawei chips only for inference. The distinction is important: training is the most compute-intensive phase of AI development, and the fact that Huawei’s chips couldn’t reliably handle it suggests the hardware gap is real. But inference, the phase in which models serve users, is where business value is generated, and Huawei’s chips seem well suited for that purpose.

Meanwhile, US lawmakers are pushing to tighten restrictions further. On Thursday, lawmakers and experts accused China of buying “what they can” and stealing “what they can’t” in the artificial intelligence industry, and called on the government to consider placing DeepSeek, Moonshot AI and MiniMax on the export control entity list.

What Huang is really warning about

Huang’s warning ultimately concerns the co-design of software and hardware. Nvidia’s dominance is not just based on making the fastest chips, but also on CUDA’s position as the default development environment for AI. When researchers write code, they write for CUDA. When startups build products, they build them on CUDA. When governments invest in AI infrastructure, they buy Nvidia GPUs because that’s what the software requires. DeepSeek’s migration to CANN threatens to create a parallel ecosystem where none of that applies.

The scale of Nvidia’s business shows what is at stake. The company’s market capitalization exceeds $3 trillion. Its data center revenue grew 93% year over year in its most recent quarter. Its chips power training for virtually all major AI models outside of China. If the most capable Chinese AI lab proves that competitive models can be built without Nvidia, the case for maintaining export controls weakens, the case for buying Nvidia weakens, and the geopolitical assumptions that have shaped AI policy for the past three years come under pressure.

None of this means Huawei is about to overtake Nvidia. The performance gap is large and growing. The R2 training failures show that Chinese hardware is not yet ready for the most demanding AI workloads. But Huang is not warning about today. He is warning of a trajectory in which DeepSeek proves the concept, other labs follow, and the CUDA moat that has made Nvidia the most valuable company in the AI supply chain begins to erode.

The fact that Nvidia’s CEO is the one making this argument publicly suggests that he believes the risk is no longer theoretical. DeepSeek V4 will be the first major test. If a basic multimodal model runs competitively on Huawei’s silicon, the warning Huang issued Wednesday will look less like corporate lobbying and more like the most important forecast in the AI chip war so far.