What you need to know

- Google announced Gemini 3.1 Flash Live, an update for Gemini Live and Search Live that brings more natural, low-latency voice assistance.

- This version of the AI is lightweight: Google has sped up its response times and given it a larger context window so it can keep track of longer conversations.

- The company highlights notable improvements over the Gemini 2.5 Flash Native model, which debuted in December.

Google’s iterations of Gemini never stop, and this week is no different, with the launch of a new, lightweight, low-latency model.

The company detailed what users can expect from Gemini 3.1 Flash Live, its “highest quality voice and audio model” to date. Google claims this new version of Gemini is part of its “voice-first AI” ambitions to achieve “natural speed and rhythm.” If you’ve been keeping up with Gemini, you can probably guess where it’s headed (hint: Gemini Live). The announcement indicates that Gemini 3.1 Flash Live is heading to Gemini Live and Search Live to assist with all voice-based queries.

With this addition, Google cited “more useful and natural responses” as a key highlight. The company adds that 3.1 Flash Live can provide support for both everyday questions and more complex topics. Since “Flash” is in the name, 3.1 Flash Live is designed to offer much faster responses than users experienced before. What’s more, “it can follow the thread of your conversation for twice as long.”

While you may have skipped your Duolingo lessons (or Google Translate practice sessions), Gemini hasn’t. Google claims that the AI is “multilingual, meaning it is possible to get real-time responses in your preferred language.”

Gemini 3.1 Flash Live reportedly scored quite high in benchmark tests, which is good news for developers and businesses. On the technical side, Google highlights the AI’s “enhanced tonal” capabilities, as well as its ability to recognize “acoustic nuances,” such as pitch.

Your voice comes first

Developers are getting a little more, too. As Google claims, they can create conversational agents that help in real time. The model is available through the Gemini API and AI Studio, and developers are seeing higher task completion rates in “noisy” environments. It’s not just the AI’s ability to deliver more appropriate responses in live conversations, but also improvements that separate a person’s speech from loud traffic noise.

The AI’s instruction-following capabilities have also improved. Google states: “Your agent will stay within your operational barriers, even when conversations take unexpected turns.” This is in addition to the other updates in Gemini 3.1 Flash Live mentioned earlier, such as its multilingual capabilities and low latency.

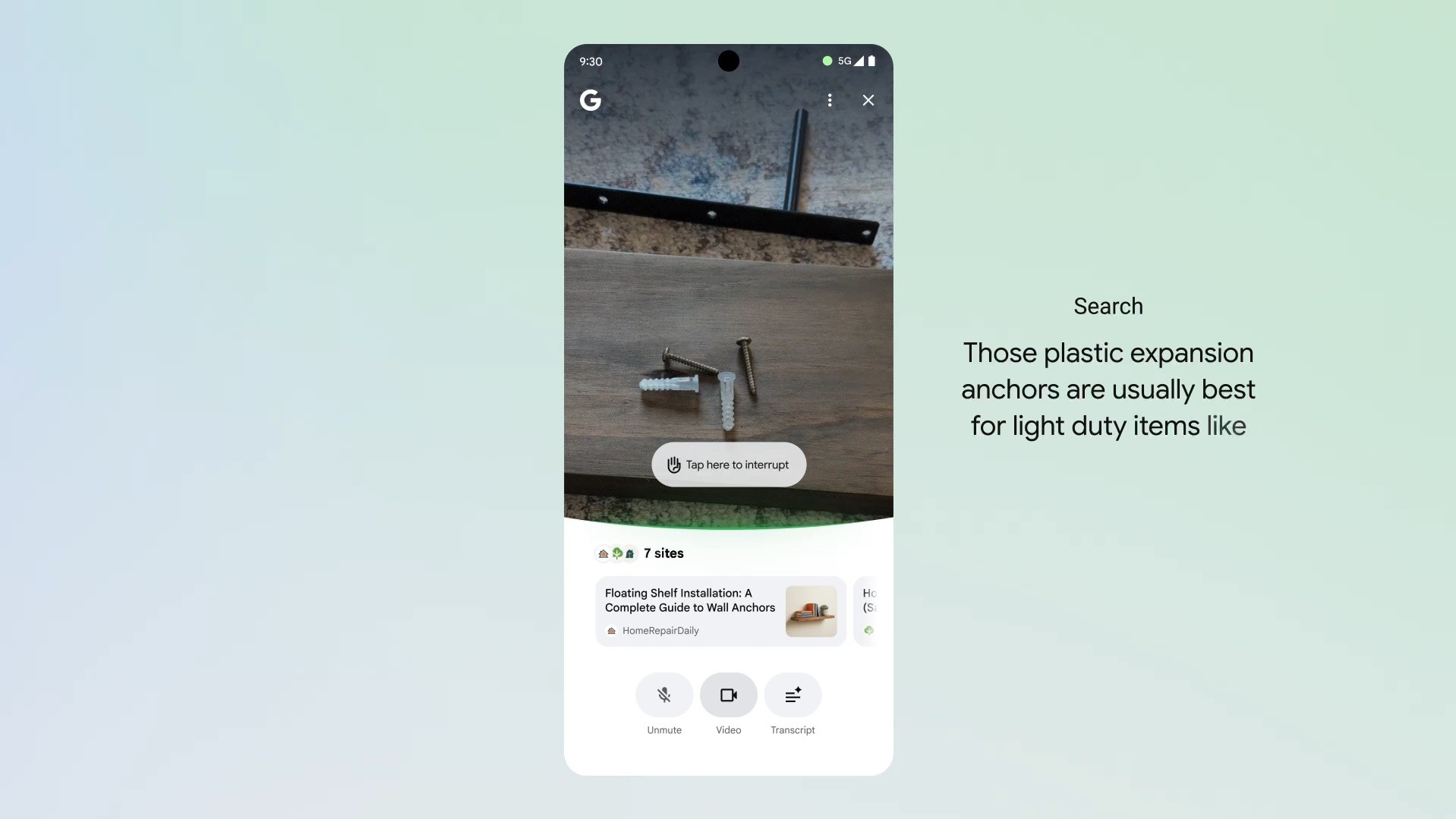

As Google pushes the voice-based side of Gemini Live, it is worth remembering an earlier update that brought it into the real world to see what you’re doing. Users can share their camera with Gemini, essentially allowing them to ask a question about what they’re looking at. That update also includes a screen-sharing feature, so if you’ve searched for something you’re not sure about, you can ask Gemini to give you the details.

Android Central’s opinion

An update like this seems like the obvious next step for Google. It’s doing it in a slightly different way than I would have expected. I thought it would have doubled down more on its camera feature or screen-sharing aspect. But boosting its voice-based side isn’t so bad either. We’re talking about real-time support, so Gemini’s ability to understand the user as best it can is important. Nothing sucks more than having to repeat the same thing over and over again to a literal computer.