Summary

- Cutting the default effort from high to medium limited Claude Code's capabilities; Anthropic restored the high default on April 7.

- A bug cleared Claude's previous “thinking” on every turn, causing forgetfulness and repetition; fixed on April 10.

- A system prompt instruction capping text at 25/100 words to reduce verbosity hurt coding quality; reverted on April 20.

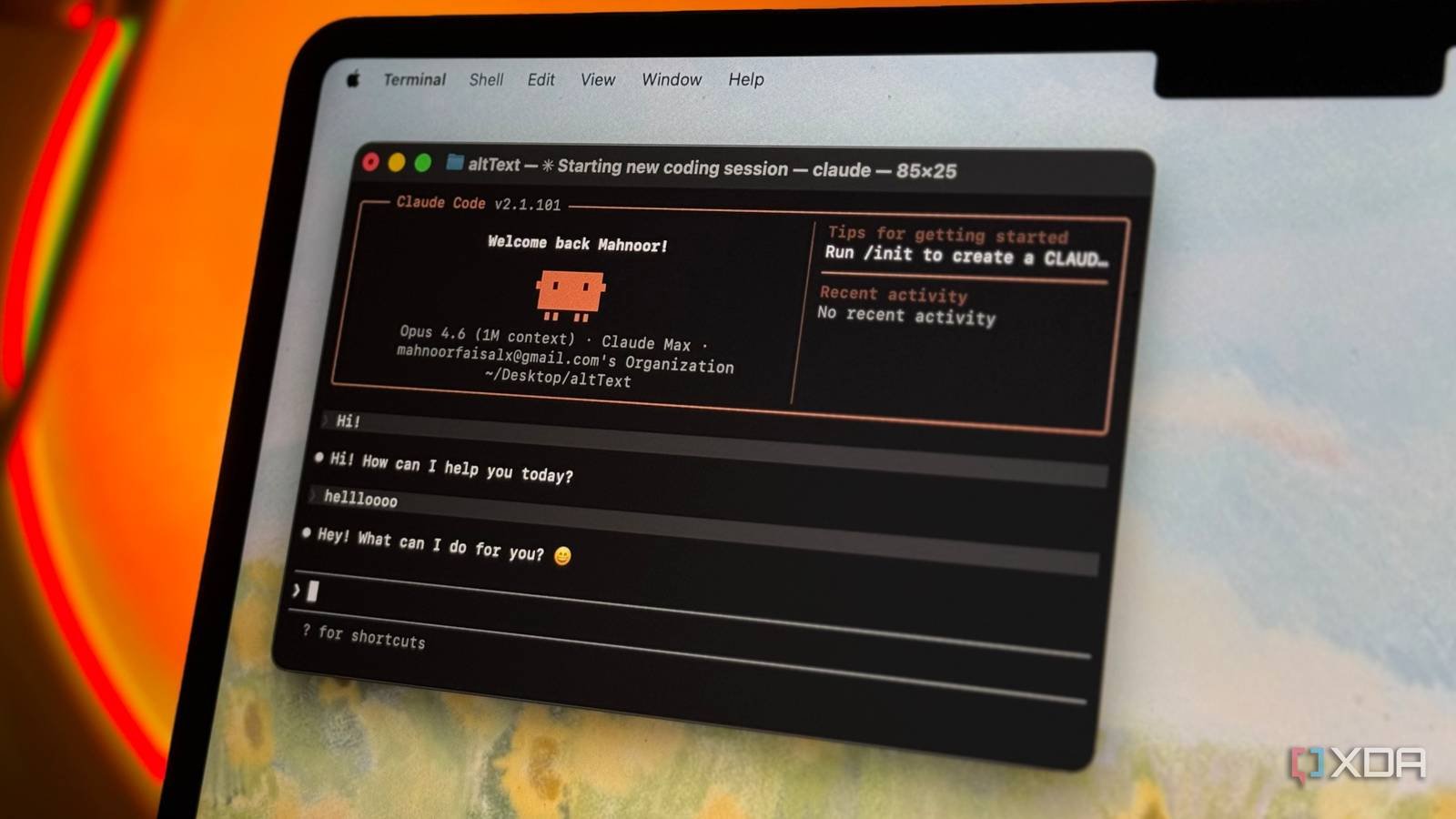

If you’ve been using Claude Code over the last month, you may have noticed that the agent's quality slipped a bit. It’s easy to chalk that up to your imagination, but this time it’s not you; it’s Claude Code. Anthropic has published a blog post detailing three ways Claude Code's performance has degraded since March and how the company fixed each of them.

Claude Code suffered three problems in the last month

It was a difficult period for the AI agent.

On its website, Anthropic published a blog post titled “An update on the recent Claude Code quality reports.” In it, the company describes three changes that affected Claude Code’s performance and caused a noticeable decline in its capabilities. The good news is that, as of April 20, all three have been fixed, so Claude Code should be back on track now.

The first problem began on March 4, when Anthropic noticed that Claude Code’s default reasoning effort was causing long delays, to the point where people assumed the app had frozen. To remedy this, the company changed the default effort level from “high” to “medium”, which limited Claude Code’s capabilities. Anthropic reverted the change on April 7 after users said they would rather keep “high” as the default and lower the effort themselves whenever they want Claude Code to respond faster.

The second appeared on March 26 in Sonnet 4.6 and Opus 4.6. Anthropic modified the system to clear Claude’s previous thinking if a chat remained idle for more than an hour, so that replies would use fewer tokens when the session resumed. Unfortunately, a bug caused this to happen on every turn, which meant Claude kept forgetting things and repeating itself. This was fixed on April 10.
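To make the difference concrete, here is a minimal Python sketch of the behavior described above. It is purely illustrative and not Anthropic's code: the `Session` class, the function names, and the way thinking is stored are all hypothetical, and only the one-hour idle threshold comes from the article.

```python
from dataclasses import dataclass, field

# One hour of idle time, the threshold mentioned in the article.
IDLE_LIMIT_SECONDS = 60 * 60


@dataclass
class Session:
    """Hypothetical stand-in for a chat session (not a real Anthropic type)."""
    last_active: float                                   # timestamp of the previous turn, in seconds
    thinking: list[str] = field(default_factory=list)   # earlier reasoning kept in context


def next_turn_intended(session: Session, now: float) -> None:
    """Intended behavior: drop earlier thinking only after more than an hour idle."""
    if now - session.last_active > IDLE_LIMIT_SECONDS:
        session.thinking.clear()
    session.last_active = now


def next_turn_buggy(session: Session, now: float) -> None:
    """Buggy behavior as described: earlier thinking is dropped on every turn."""
    session.thinking.clear()
    session.last_active = now
```

In this sketch, a session that resumes one minute after its last turn keeps its earlier thinking under `next_turn_intended` but loses it under `next_turn_buggy`, which matches the forgetting and repetition users reported.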

Finally, on April 16, Anthropic says it “added a system prompt instruction to reduce verbosity.” Specifically, the instruction read:

“Length limits: Keep text between tool calls to ≤25 words. Keep final answers to ≤100 words unless the task requires more detail.”

The change didn’t mix well with other prompt adjustments and, by Anthropic's account, hurt coding quality. It was reverted on April 20.